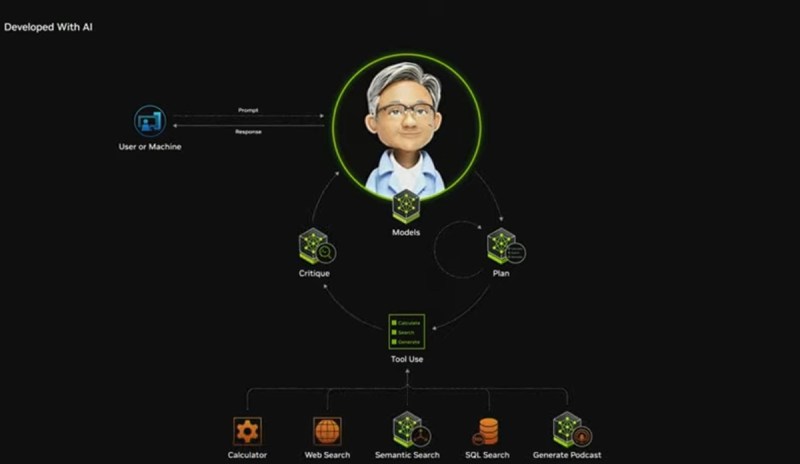

In the future, we will all manage our own AI agents

Jensen Huang, CEO of Nvidia, gave an eye-opening keynote talk at CES 2025 last week. It was highly appropriate, as Huang’s favorite subject of artificial intelligence has exploded across the world and Nvidia has, by extension, become one of the most valuable companies in the world. Apple recently passed Nvidia with a market capitalization of $3.58 trillion, compared to Nvidia’s $3.33 trillion.

The company is celebrating the 25th year of its GeForce graphics chip business, and it has been a long time since I did my first interview with Huang back in 1996, when we talked about graphics chips for a “Windows accelerator.” Back then, Nvidia was one of 80 3D graphics chip makers. Now it’s one of around three or so survivors. And it has made a huge pivot from graphics to AI. Huang hasn’t changed much.

For the keynote, Huang announced a video game graphics card, the Nvidia GeForce RTX 50 Series, but there were also a dozen AI-focused announcements about how Nvidia is creating the blueprints and platforms to make it easy to train robots for the physical world. In fact, in a feature dubbed DLSS 4, Nvidia is now using AI to improve the frame rates of its graphics chips. And there are technologies like Cosmos, which helps robot developers use synthetic data to train their robots. A few of these Nvidia announcements were among my 13 favorite things at CES.

After the keynote, Huang held a free-wheeling Q&A with the press at the Fontainebleau hotel in Las Vegas. At first, he engaged in a hilarious discussion with the audio-visual team in the room about the sound quality, as he couldn’t hear questions up on stage. So he came down among the press and, after teasing an AV team member named Sebastian, he answered all of our questions, and he even took a selfie with me. Then he took a bunch of questions from financial analysts.
I was struck by how technical Huang’s command of AI was during the keynote; it reminded me more of a Siggraph technology conference than a keynote speech for consumers at CES. I asked him about that and you can see his answer below. I’ve included the whole Q&A from all of the press in the room.

Here’s an edited transcript of the press Q&A.

Jensen Huang, CEO of Nvidia, at CES 2025 press Q&A.

Question: Last year you defined a new unit of compute, the data center. Starting with the building and working down. You’ve done everything all the way up to the system now. Is it time for Nvidia to start thinking about infrastructure, power, and the rest of the pieces that go into that system?

Jensen Huang: As a rule, at Nvidia we only work on things that other people do not, or that we can do singularly better. That’s why we’re not in that many businesses. The reason why we do what we do: if we didn’t build NVLink72, who would have? Who could have? If we didn’t build the type of switches like Spectrum-X, this ethernet switch that has the benefits of InfiniBand, who could have? Who would have? We want our company to be relatively small. We’re only 30-some-odd thousand people. We’re still a small company. We want to make sure our resources are highly focused on areas where we can make a unique contribution.

We work up and down the supply chain now. We work with power delivery and power conditioning, the people who are doing that, cooling and so on. We try to work up and down the supply chain to get people ready for these AI solutions that are coming.

Hyperscale was about 10 kilowatts per rack. Hopper is 40 to 50 to 60 kilowatts per rack. Now Blackwell is about 120 kilowatts per rack. My sense is that that will continue to go up. We want it to go up because power density is a good thing. We’d rather have computers that are dense and close by than computers that are disaggregated and spread out all over the place. Density is good. We’re going to see that power density go up.
We’ll do a lot better cooling inside and outside the data center, much more sustainable. There’s a whole bunch of work to be done. We try not to do things that we don’t have to.

HP EliteBook Ultra G1i 14-inch notebook next-gen AI PC.

Question: You made a lot of announcements about AI PCs last night. Adoption of those hasn’t taken off yet. What’s holding that back? Do you think Nvidia can help change that?

Huang: AI started in the cloud and was created for the cloud. If you look at all of Nvidia’s growth in the last several years, it’s been the cloud, because it takes AI supercomputers to train the models. These models are fairly large. It’s easy to deploy them in the cloud. They’re called endpoints, as you know. We think that there are still designers, software engineers, creatives, and enthusiasts who’d like to use their PCs for all these things.

One challenge is that because AI is in the cloud, and there’s so much energy and movement in the cloud, there are still very few people developing AI for Windows. It turns out that the Windows PC is perfectly adapted to AI. There’s this thing called WSL2. WSL2 is a virtual machine, a second operating system, Linux-based, that sits inside Windows. WSL2 was created to be essentially cloud-native. It supports Docker containers. It has perfect support for CUDA.

We’re going to take the AI technology we’re creating for the cloud and now, by making sure that WSL2 can support it, we can bring the cloud down to the PC. I think that’s the right answer. I’m excited about it. All the PC OEMs are excited about it. We’ll get all these PCs ready with Windows and WSL2. All the energy
