Anthropic’s fastest model, Claude 3.5 Haiku, now generally available

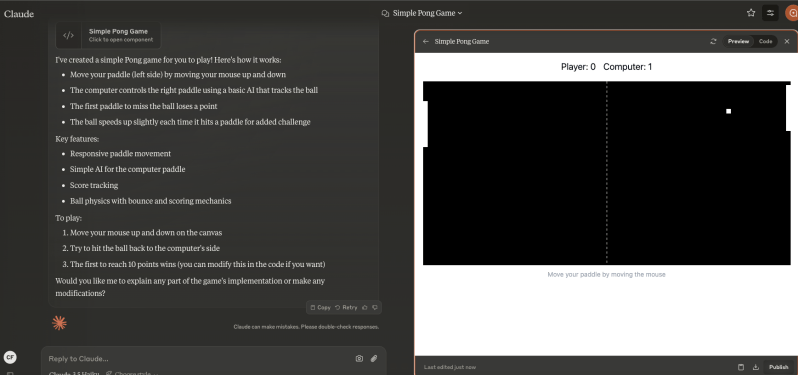

Anthropic has rolled out its Claude 3.5 Haiku model to all users through the Claude chatbot on the web and mobile apps, as spotted by AI power users on X.

Previously limited to developers accessing it via Anthropic’s API following its launch in October 2024, this smaller, faster model has garnered attention for its ability to outperform larger models on key benchmarks while maintaining a competitive price point.

According to the third-party benchmarking organization Artificial Analysis, Claude 3.5 Haiku “has a lower latency compared to average, taking 0.80s to receive the first token (TTFT),” yet “is slower compared to average, with a output speed of 65.1 tokens per second.”

The release, which hasn’t been officially announced, comes on the heels of major updates from Anthropic’s AI rivals OpenAI and Google, which have also shipped new models to general availability in their chatbots as the year winds down, namely OpenAI’s o1 and o1-mini models and Google’s Gemini 2.

The question for Anthropic is whether customers will be impressed enough with Claude 3.5 Haiku’s performance to sign up for its Pro tier, or to keep using it instead of some of these other advanced and fast rivals.

Claude 3.5 Haiku is accessible through the Claude Chatbot

As the fastest and most cost-effective model in Anthropic’s lineup, Claude 3.5 Haiku excels in real-time tasks such as processing large datasets, analyzing financial documents, and generating outputs from long-context information. It features a 200,000-token context window, more than the 128,000-token window on OpenAI’s GPT-4 and GPT-4o, allowing it to handle extensive input with ease.

On the Claude chatbot, Haiku brings functionality that enhances its versatility.
Users can analyze images and file attachments, making it useful for multimedia tasks and workflows involving large document sets. Haiku also integrates with Claude Artifacts, the interactive sidebar first introduced in June 2024. Artifacts provides a dedicated workspace for manipulating and refining AI-generated content in real time, including running full apps.

In my test of Artifacts with Haiku this morning, it was able to code a fully playable version of Pong in less than a minute.

Despite its strengths, Haiku has limitations. It does not currently support web browsing or image generation, both of which are offered by competitors like OpenAI’s GPT-4o and GPT-4. Additionally, in my brief test this morning, it failed the “Strawberry Test,” a common user-designed challenge in which an AI must identify all three R’s in the word strawberry.

Access and subscription details

Claude 3.5 Haiku is freely accessible via the Claude chatbot, but users face a variable daily message limit depending on server demand. For example, when I tried the free tier this morning, I was able to perform approximately 10 exchanges (20 total messages in and out) before reaching Anthropic’s quota, which resets daily.

To unlock more extensive usage, users can subscribe to the Claude Pro plan, priced at $20 per month. This subscription provides up to five times the free tier’s usage, priority access during high-traffic periods, early access to new features, and access to additional models like Claude 3 Opus. The pricing structure mirrors OpenAI’s ChatGPT Plus subscription, offering a premium experience for power users.

Performance and cost

On the API, Claude 3.5 Haiku offers exceptional performance at an affordable price. Starting at $0.80 per million input tokens and $4 per million output tokens, it provides an economical solution compared to larger models like Claude 3 Opus.
Developers can reduce costs further using prompt caching, which offers up to 90% savings, and the Message Batches API, which cuts costs by 50%.

In benchmark testing, Haiku has surpassed many larger, publicly available models. Its performance includes a 40.6% score on SWE-bench Verified, a key coding benchmark, demonstrating its strength in tasks requiring intelligence and speed. This makes Haiku an excellent choice for user-facing applications and time-sensitive workflows.

Key considerations

While Claude 3.5 Haiku delivers strong capabilities, potential users should consider its current limitations. The lack of web browsing and image generation may make it less appealing for certain use cases compared to competitors. Furthermore, the daily message cap may be inconvenient for users who don’t wish to upgrade to the Claude Pro subscription.

However, with features like image and file analysis, robust coding capabilities, and integration with Artifacts, Haiku remains a powerful tool for tasks requiring speed and precision. The Artifacts feature, in particular, extends its functionality beyond text generation, enabling collaborative editing and real-time content refinement.

For users ready to explore its potential, Claude 3.5 Haiku is now live and available through the Claude chatbot on web and mobile apps on iOS and Android.
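
For developers weighing the API prices quoted under performance and cost, a rough estimate is easy to script. The Python sketch below uses only the figures reported in this article ($0.80 and $4 per million input and output tokens, up to 90% savings from prompt caching, 50% from the Message Batches API). The helper name `estimate_cost` and its parameters are hypothetical, and the way the discounts are applied is an assumption: the caching figure is treated as a best case on the cached share of input tokens, and the batch discount is applied to the whole bill. Anthropic's actual billing rules may differ.

```python
# Prices quoted in the article, in USD per million tokens.
INPUT_PRICE_PER_MTOK = 0.80
OUTPUT_PRICE_PER_MTOK = 4.00

# Discounts quoted in the article. How they combine is an assumption here.
MAX_CACHE_SAVINGS = 0.90  # "up to 90%": best case, applied to cached input only
BATCH_DISCOUNT = 0.50     # Message Batches API, applied to the whole bill


def estimate_cost(input_tokens, output_tokens, cached_fraction=0.0, batch=False):
    """Return a rough USD estimate for one workload (hypothetical helper)."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")

    # Split input tokens into a cached share and a full-price share.
    cached = input_tokens * cached_fraction
    uncached = input_tokens - cached

    # Cached tokens are billed at the best-case discounted rate.
    billable_input = uncached + cached * (1 - MAX_CACHE_SAVINGS)
    input_cost = billable_input * INPUT_PRICE_PER_MTOK / 1_000_000
    output_cost = output_tokens * OUTPUT_PRICE_PER_MTOK / 1_000_000

    total = input_cost + output_cost
    if batch:
        total *= 1 - BATCH_DISCOUNT
    return total


if __name__ == "__main__":
    # One million tokens in and one million out at list price.
    print(f"${estimate_cost(1_000_000, 1_000_000):.2f}")  # prints $4.80
```

Under these assumptions, sending the same workload through the batch path would halve the estimate to $2.40, while caching only reduces the input side, which is already the cheaper part of the bill.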
