OpenAI rolls out ChatGPT for iPhone in landmark AI integration with Apple

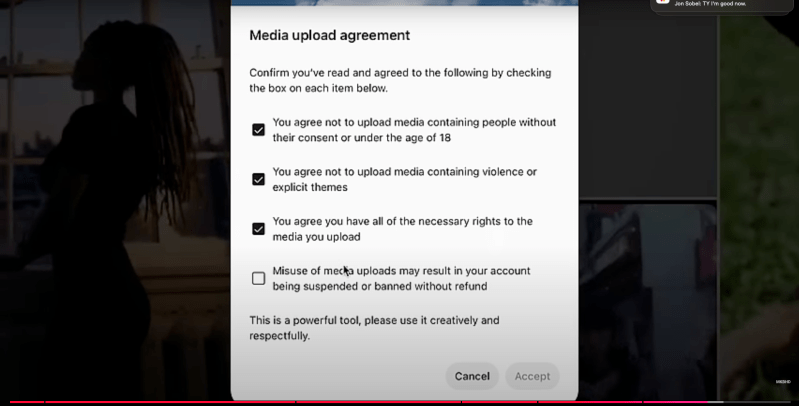

OpenAI demonstrated its new iPhone integration on Wednesday as iOS 18.2 rolled out to users, bringing ChatGPT directly into Siri, writing tools and camera features. The feature update, shown off on day five of OpenAI’s “12 Days of Shipmas” product launches, marks a rare opening of Apple’s core iPhone features to outside software.

ChatGPT can now process commands through Siri and handle tasks across the operating system. “When Siri thinks that it would be helped by giving a task over to ChatGPT, it can just hand it off,” Dave Cummings, engineering manager for ChatGPT at OpenAI, explained during Wednesday’s demonstration.

The system works through three main paths: Siri voice commands, Writing Tools for text editing and Visual Intelligence through the Camera Control button. Users can access basic ChatGPT features without an account, although premium capabilities require a subscription.

Inside Apple’s AI strategy: Why the iPhone maker chose OpenAI instead of building its own

The partnership addresses critical challenges for both companies. Apple, despite its $3 trillion market capitalization, has struggled to match competitors in AI development. Google’s Gemini and Anthropic’s Claude have demonstrated capabilities that surpass anything in Apple’s current AI portfolio.

“We really want to make ChatGPT as frictionless and easy to use everywhere,” Sam Altman, CEO of OpenAI, said during Wednesday’s press conference. “We love Apple devices, and so this integration is one that we’re very, very proud of.”

The timing of this release could boost Apple’s high-end device sales at a crucial moment. While the company doesn’t break out AI-specific revenue, limiting these features to iPhone 15 Pro models and newer devices creates a compelling reason for consumers to upgrade.
This strategy mirrors Apple’s previous pattern of using advanced features, such as ProRAW photography or ProRes video, to drive adoption of its premium devices, which carry margins estimated at over 60%.

The move also positions Apple differently in the AI race. Rather than competing head-on with Google and Microsoft in building foundational AI models, Apple is leveraging partnerships to bring AI to its ecosystem while maintaining its focus on hardware and user experience. This approach could prove more profitable in the short term, as AI model training remains enormously expensive with uncertain returns.

The $5 billion question: How OpenAI plans to monetize its billion-user iPhone base

For OpenAI, the partnership provides immediate access to Apple’s installed base of more than one billion iPhone users. This comes at a crucial time for the AI company, which is under pressure to generate revenue while managing massive computing costs. Recent reports indicate OpenAI’s computing expenses could reach $5 billion annually by 2025.

The partnership also arrives amid OpenAI’s broader monetization push. The company recently announced a partnership with defense contractor Anduril and launched a $200-per-month ChatGPT Pro tier. OpenAI’s CFO Sarah Friar has indicated the company is exploring advertising revenue streams.

Corporate AI spending could shift as ChatGPT comes to enterprise iPhones

For enterprise users, this integration represents more than just a new iPhone feature. Many companies have invested heavily in standalone AI solutions, often paying for multiple services like Jasper, Claude or corporate ChatGPT licenses. Native iPhone AI integration could consolidate these tools, potentially reshaping how businesses approach mobile productivity. Companies might shift their enterprise software budgets from specialized AI applications to platforms that integrate seamlessly with Apple’s ecosystem.

The integration could also reshape the competitive landscape.
Google, which pays Apple billions annually to remain the iPhone’s default search engine, may need to reassess its mobile strategy. The search giant has already accelerated its AI efforts, recently launching Gemini across its products.

Apple’s privacy-first reputation influenced the integration’s design. The system requires explicit user permission before sharing data with ChatGPT, and anonymous usage options preserve user privacy. All processing occurs on-device for basic features, with more advanced capabilities requiring cloud computation.

The future of mobile AI: A new platform war begins

The partnership highlights a broader shift in computing, where AI capabilities become as fundamental as operating systems themselves. We’re seeing the emergence of a new platform war, but unlike the mobile OS battles of the 2000s, this one centers on AI integration. The stakes are much higher: Whoever controls the AI interface likely controls the primary way users will interact with technology for years to come. Apple’s choice to partner rather than compete suggests they’ve learned from history: sometimes being the platform that hosts the best services is more valuable than trying to build everything in-house.

OpenAI has more announcements planned as part of its “12 Days of Shipmas” campaign. But the Apple partnership may prove the most consequential, reshaping how a billion users interact with AI technology daily.

Neither company is exchanging cash payments in the initial partnership, with Apple viewing the massive distribution potential of its devices as compensation enough for OpenAI. However, future revenue-sharing agreements are being explored, particularly around ChatGPT’s premium subscriptions.

For OpenAI, the deal offers something potentially priceless: Seamless access to hundreds of millions of Apple devices.
For Apple, it’s a strategic play that keeps the company competitive in AI while maintaining flexibility to partner with other providers like Google and Anthropic, suggesting that in the emerging AI platform wars, Apple is positioning itself not as a combatant, but as the battlefield itself.
