IP Copilot wants to use AI to turn your Slack messages into patents

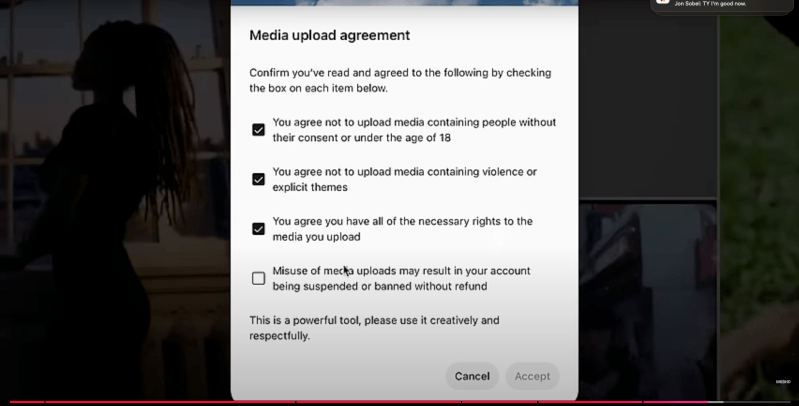

IP Copilot, a startup using artificial intelligence to modernize intellectual property management, announced today it has raised $4.2 million in seed funding led by Salesforce Ventures and Preface Ventures, with participation from NextGen Ventures and Notation.

The San Francisco-based company, founded by AI experts with over 1,000 patents between them, aims to streamline how enterprises discover and protect innovative ideas by analyzing internal communications and documents in real time.

“Everyone is an inventor,” said Austin Walters, CEO of IP Copilot, in an exclusive interview with VentureBeat. “Engineers are busier than ever and our goal is to minimize friction between ideas and patents, helping more innovators become inventors.”

Unlike other AI tools focused on patent drafting, IP Copilot emphasizes early discovery by integrating with platforms like Slack and Jira to identify potentially patentable ideas as they emerge in everyday work conversations.

How AI supercharges IP legal teams’ workflow

“At a large company, one IP counsel might be responsible for 10,000 employees. You can’t possibly read all the Slacks available to you every day, all your Jira tickets, and all the Confluence pages that change,” explained Jason Harrier, who recently joined as founder and general counsel after serving as Head of IP at Plaid. “Our tool gives patent teams the superpowers to actually read everything available to them and automatically categorize the best patent candidates.”

The company’s approach combines traditional machine learning with large language models, prioritizing accuracy over pure automation. “About 60% is traditional machine learning,” said Harrier. “We use what I think is the best AI for what it does well, and then use large language models where they work really well.”

To address privacy concerns, the system only monitors public channels and can be deployed within an enterprise’s own cloud environment. “Everything is a first-party system with us,” Walters emphasized. “We’re not sending communications to a third party.”

Enterprise IP management faces AI transformation

The funding comes at a critical time for enterprise IP management. As AI innovation accelerates, companies are struggling to identify and protect intellectual property effectively. While most AI startups in the space focus on automating patent drafting, IP Copilot’s emphasis on early discovery could reshape how companies build their patent portfolios.

The startup’s roadmap suggests broader ambitions. Plans include expanding into trade secret management and introducing natural language interfaces for portfolio analysis. These moves could position IP Copilot to become a comprehensive IP intelligence platform rather than just another legal tech tool.

But perhaps the company’s most striking innovation isn’t technological – it’s philosophical. In a landscape crowded with AI companies promising to replace human expertise, IP Copilot has chosen a different path. “AI isn’t going to take your job,” says Harrier, “but an attorney that’s using AI could take your job.”

For patent professionals watching the AI revolution unfold, that distinction might make all the difference.
