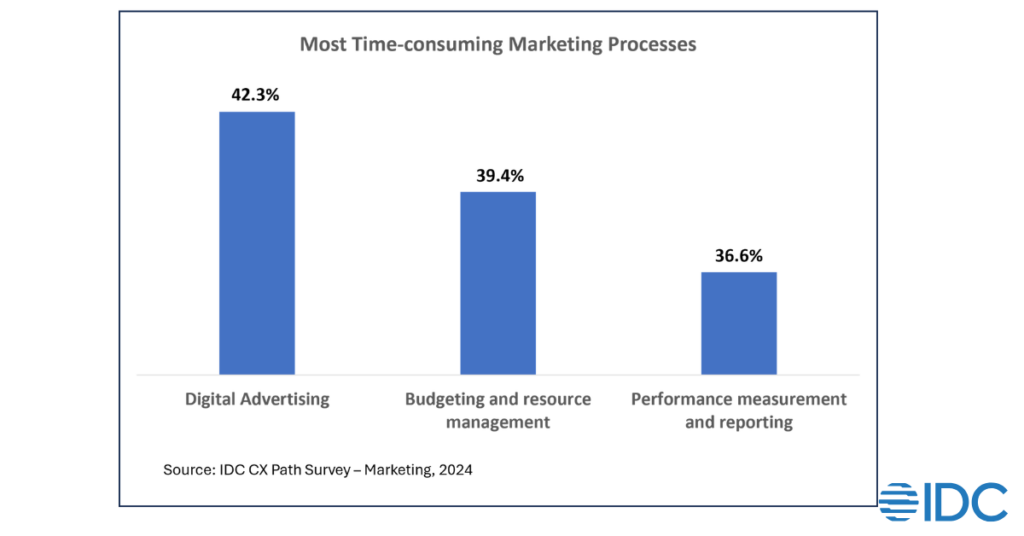

Just when businesses in Asia/Pacific thought they were getting to grips with artificial intelligence-led disruptions in their industries, along comes something new: Agentic AI, with its ability to turn generative AI (GenAI) functionality and capabilities into actionable services. In practical terms, Agentic AI is more than just advanced chatbots. It is the next step in the evolution of AI, allowing AI agents to act with increased autonomy. A simple retail example: where the AI would previously recommend restaurants based on a user’s preferences, it can now book the restaurant and offer alternatives that match the user’s health and dietary needs (vegan, gluten-free, high protein, etc.).

From a marketing perspective, this progression in AI utilization has a drastic impact on how businesses promote their goods and services to potential customers. Previously, marketers targeted their campaigns directly at customers, but now the shortlisting and decisions are made by the AI. How does the AI choose the “right” product/service? What changes do marketing teams need to make to incorporate Agentic AI?

Before we try to understand where we are going, let’s first come to grips with where we are by looking at the current state of marketing in Asia and how marketers are currently using AI.
Some of the key goals for marketing in the region today are:

- Develop a more unified, enterprise-wide marketing strategy: provide consistent marketing experiences across various touchpoints
- Personalization as a differentiator: make marketing campaigns relevant to segments, micro-segments or even individuals based on their personal preferences and characteristics
- Streamline processes through automation

Asian businesses, particularly those in China, have been relying on AI in all of these areas. Some of the more common uses of AI are:

- Automated content creation (including visual content such as videos and images) for campaigns
- Predictive analytics to improve overall campaign effectiveness and performance
- Data analysis and insights into customer behavior trends, gauging public sentiment and achieving a better understanding of the customer journey

More detailed insights into how Chinese firms are using AI in marketing can be found in IDC PeerScape: C2G Peer Insights to Augment Customer Intelligence Using Generative AI.

By leveraging AI, marketers can optimize their campaigns to deliver targeted messaging to a wide range of customer segments across numerous online channels, while controlling distribution frequency to minimize advertising fatigue cost-effectively. For example, as shown in the figure below, digital advertising is still the most time-consuming process for marketers. Actions such as amending and formatting communications for different digital mediums, as well as determining which segments to target, take a significant amount of time and prolong the time required to launch a campaign. All of these can now be done by GenAI and Agentic AI.

With a better understanding of how marketers in the region are using AI, let’s now look at Agentic AI and what the future holds for marketing.
Agentic AI Will Impact the Marketing Workforce Composition

The continued use of AI, especially in campaign cost optimization, will change the composition of the marketing workforce. Currently, marketers are using AI to take over mundane and repetitive tasks (e.g. formatting images across different social media platforms). Eventually, AI will take over full-time marketing roles, allowing humans to focus on more strategic initiatives. In the IDC FutureScape: Worldwide Chief Marketing Officer 2025 Predictions — Asia/Pacific (Excluding Japan) Implications, IDC lists the most urgent trends that marketing leaders must pay attention to. One of our predictions on the impact of Agentic AI on workforces states that by 2028, 1 out of 5 marketing roles or functions will be held by an AI worker, shifting human expertise to driving strategy, creativity, ethics and managing a blended human and AI workforce.

Increased Focus on AI Governance by Marketing not IT

As Agentic AI takes on more responsibilities, there will be a need to supervise it and ensure it performs properly. This monitoring will fall to the marketing team itself, which knows when something has gone wrong, rather than to IT, which monitors based on code alerts. The marketing team will therefore need to be trained on AI use, so that they are comfortable with AI and understand how it can help rather than replace them. Only then can they truly appreciate the technology and learn the processes and systems used to fine-tune AI performance or troubleshoot errors when they occur.

Marketing Workflows Will Change

The use of Agentic AI by consumers will force a change in the marketing mindset, creating new processes and areas of focus that will force businesses and marketers to rethink how they operate. As an example, let’s address the question raised earlier in this blog: How does the AI choose the “right” product/service?
When the Internet and search engines grew in popularity, search engine optimization was created to improve the quality and quantity of website traffic. Companies had to rethink how they set up their websites to ensure they were “visible” to search engines and appeared in their rankings. In addition, many paid to have their websites listed at the top of search results. IDC predicts that businesses in Asia will have to work with AI companies and start spending on Large Language Model (LLM) optimization in the same manner, so that businesses and their products and services are visible to Agentic AI systems. By 2029, companies will spend up to 3x more on LLM optimization than search optimization to influence GenAI systems and raise the priority and ranking of their brands.

The AI road ahead no doubt has more bumps and turns, and marketers must be willing to meet these changes and challenges head-on. Here are a few things marketers can do to prepare for Agentic AI:

- Build a portfolio of AI case studies and use cases to determine what works best for you. By matching thought leadership initiatives with AI-infused case studies, marketers will be able to develop campaigns that competitively differentiate their companies and products.
- Work with the technology team to ensure marketing