Times Change: The Era of the Private Equity Denominator Effect

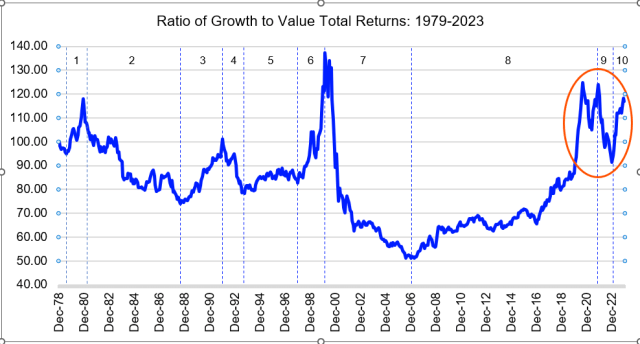

After private equity’s extraordinary performance in 2021, private market valuations decoupled from those of both public equities and bonds in 2022, leaving many institutional investors over-allocated to private markets. This is the so-called denominator effect: private asset allocations exceed the percentage threshold set in an allocation policy and must be corrected. At the same time, a negative cash flow cycle has further reduced anticipated liquidity, which unrealized paper losses in traditional asset portfolios had already compressed. This makes portfolio adjustment decisions even more challenging.

Last year’s data show that the rebound in equity prices and the pause in interest rate hikes have provided some relief, but they have neither solved the private market liquidity issue nor addressed the denominator effect’s implications. According to recent Lazard research, liquidity needs led to a significant increase in limited partner (LP)-led secondary sales in 2023.

The economic paradigm may have changed, and it will remain uncertain. Given the potential for higher-for-longer interest rates, stale net asset values (NAVs), and a negative cash flow cycle, the denominator effect may become a more systematic feature of LP portfolios and force LPs to make more frequent allocation and liquidity decisions. So, what are some traditional strategies for addressing the denominator effect in private equity, and are there other, more innovative and efficient risk-transfer approaches available today?

The Current PE Denominator Effect

While 2021 was a year of extraordinary PE outperformance, 2022 was the real outlier, as private markets showed an unprecedented divergence in performance and valuation from their public counterparts. A reverse divergence followed in 2023, with the largest negative return difference ever recorded, but it did not offset the current denominator effects.

According to Cliffwater research, PE returned 54% in 2021, compared with 42% for public equities.
The following year, PE generated 21%, outperforming stocks by 36 percentage points. In 2023, however, PE returned only 0.8%, compared with 17.5% for equities.

Impact of the Denominator Effect

For investors building up a PE allocation who have not yet reached their target, the denominator effect, albeit painful in terms of overall negative performance, could accelerate the optimal portfolio construction process. For the (many) other investors with a near-optimal allocation and a related overcommitment strategy, the emergence of the denominator effect traditionally implies the following:

| Consequence | Negative impact |
|---|---|
| Reduced allocations to current and possibly future vintages | Lower future returns; out-of-balance vintage diversification |
| Smoothed compounding effect of PE returns amid curtailed reinvestment | Lower returns |
| Latent/potential negative risk premium of the PE portfolio: NAV staleness, which protected the downside, may limit the “upside elasticity” that accompanies any market rebound | Compromised risk diversification; suboptimal asset allocation dynamics; potential impact on future return targets |
| Crystallization of losses | Lower current returns; unbalanced vintage diversification |

Tackling the Denominator Effect

Investors counter the denominator effect with various portfolio rebalancing strategies based on their specific targets, constraints, and obligations. Traditionally, they either wait or sell assets in the secondary market. Recently introduced collateralized fund obligations (CFOs) have given investors an additional, if more complex, tool for tackling the denominator effect.

1. The Wait-and-See Strategy

Investors with well-informed boards and flexible governance could rebalance their overall portfolio allocation with this technique. Often, the wait-and-see strategy involves adopting wider target allocation bands and reducing future commitments to private funds.
The former makes market volatility more tolerable and reduces the need for automatic, policy-driven adjustments. Of course, the wait-and-see strategy assumes that market valuations will mean-revert, and within a given time frame.

In theory, cash flow simulations under different scenarios can show how various commitment pacing strategies would navigate different market conditions. In practice, commitment pacing strategies are inherently rigid. Why? Because no change in pacing can alter commitments already made, legacy portfolio NAVs, or the future cash flows they generate.

Funding risk is a function of market risk, but private market participants have neglected this for two reasons: the secular abundance of liquidity and a cash flow–based valuation perspective that has limited structural sensitivity to market risk. Internal rates of return (IRRs) and multiples cannot be compared with the time-weighted returns of traditional assets. Moreover, NAVs have historically carried uneven information about market risk because they are not systematically marked to market across all funds.

What does this mean? It points to an unmeasured, implicit possibility that the existing stock of private asset investments is overvalued and that a negative risk premium could result if private asset valuations rebound less sharply than those of public assets.

According to Cliffwater commentary and analysis, private equity delivered a significant negative risk premium in 2023. As of June 2022, the annual outperformance of PE vs. public stocks was 5.6 percentage points (11.4% – 5.8%), with excess performance of 12 and 36 percentage points for 2021 and 2022, respectively. Through June 2023, public markets rebounded by 17.5%, compared with 0.8% for private equity. As a consequence, the reported long-term figures are adjusted to 11% for PE and 6.2% for public markets, reducing the derived outperformance to 4.8 percentage points.
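The return figures above reduce to simple differences, and the same numbers can illustrate how a valuation gap pushes an allocation off target. The Python snippet below is a back-of-the-envelope sketch, not the author’s or Cliffwater’s method. It assumes a hypothetical two-asset portfolio with a 20% PE target and 80% in public equities, no capital calls, distributions, or rebalancing, and treats the cited figures as fiscal years ending in June. The fiscal 2022 public-equity return (-15%) is implied from the stated 36-point gap rather than quoted. The names `spread_pp` and `pe_weight_path` are illustrative.

```python
"""Back-of-the-envelope sketch: PE vs. public-equity return gaps and the
resulting drift in a PE allocation (the denominator effect).

Hypothetical assumptions (not from the article): a two-asset portfolio of PE
and public equities, no capital calls, distributions, or rebalancing, and the
cited returns treated as fiscal years ending in June.
"""

# Annual returns in percent, as cited in the text (Cliffwater).
PE_RETURNS = {2021: 54.0, 2022: 21.0, 2023: 0.8}
# FY2022 public return is implied from the stated 36-point gap, not quoted.
PUBLIC_RETURNS = {2021: 42.0, 2022: 21.0 - 36.0, 2023: 17.5}


def spread_pp(pe: float, public: float) -> float:
    """PE return minus public-equity return, in percentage points (1 decimal)."""
    return round(pe - public, 1)


def pe_weight_path(pe_target: float, years: list[int]) -> list[float]:
    """PE share of the hypothetical portfolio at the end of each year.

    Each sleeve compounds at its own return and nothing is rebalanced,
    so the drift reflects relative valuation moves only.
    """
    pe_value, public_value = pe_target, 1.0 - pe_target
    weights = []
    for year in years:
        pe_value *= 1 + PE_RETURNS[year] / 100
        public_value *= 1 + PUBLIC_RETURNS[year] / 100
        weights.append(pe_value / (pe_value + public_value))
    return weights


if __name__ == "__main__":
    print(spread_pp(11.4, 5.8))  # long-term gap as of June 2022: 5.6
    print(spread_pp(11.0, 6.2))  # long-term gap after the 2023 update: 4.8
    print(spread_pp(PE_RETURNS[2023], PUBLIC_RETURNS[2023]))  # FY2023 gap: -16.7
    for year, weight in zip([2022, 2023], pe_weight_path(0.20, [2022, 2023])):
        print(year, f"{weight:.1%}")  # 2022 26.2%, then 2023 23.4%
```

Under these assumptions, the PE weight climbs to about 26.2% after fiscal 2022 and falls back only to about 23.4% after fiscal 2023: the 2023 reverse divergence narrows the overweight but does not erase it.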
Relative to the 17.5% return on public stocks, PE’s 0.8% implies a negative risk premium of 16.7 percentage points on the value of balance sheet assets, an impact for which long-term outperformance data are irrelevant. The allocation strategy is long term, but an actual PE portfolio’s valuation is not: its true economics are a function of its actual liquidation and turnover terms. Patience may be neither mandatory nor beneficial. Whether to hold on to private assets should always be considered from the perspective of the expected risk premium. Notably, the resulting reduction in future commitments, associated with negative cash flow cycles, may further reduce the benefits of return compounding for private assets.

2. The Secondary Sale Strategy

Investors may tap into secondary market liquidity by selling their private market stakes through LP-led secondaries, whereby an LP sells its fund interests to another LP. Although this provides investors with liquidity and cash in hand, which is critical when fund distributions are reduced, in 2022 LPs could sell their PE assets at an average of only 81% of NAV, according to Jefferies. By selling in the
