Apple’s ELEGNT framework could make home robots feel less like machines and more like companions

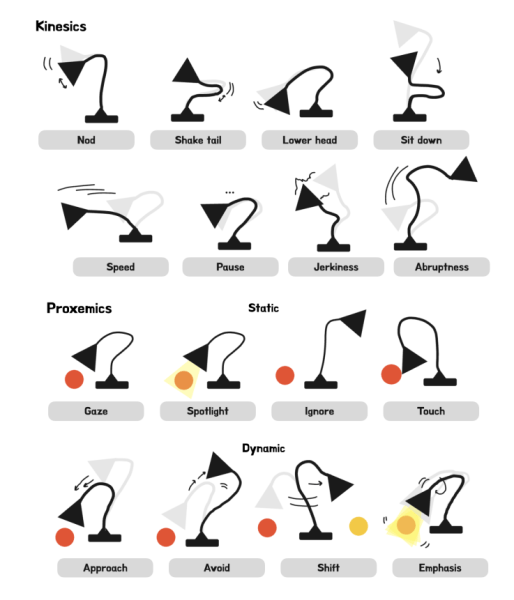

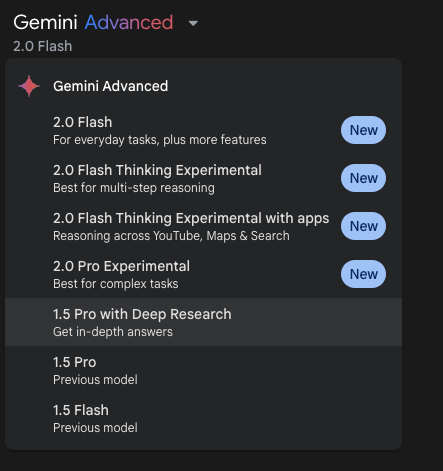

Apple researchers have developed a new framework for making non-humanoid robots move more naturally and expressively during interactions with people, potentially paving the way for more engaging robotic assistants in homes and workplaces.

The research, published this month on arXiv, introduces Expressive and Functional Movement Design (ELEGNT), which allows robots to convey intentions, emotions and attitudes through their movements rather than just completing functional tasks.

“For robots to interact more naturally with humans, robot movement design should integrate expressive qualities, such as intention, attention and emotions, alongside traditional functional considerations like task fulfillment, spatial constraints and time efficiency,” the researchers from Apple’s robotics team write in their research paper.

(Credit: Apple)

How a desk lamp became the perfect test subject for robot emotions

The study focused on a lamp-like robot, reminiscent of Pixar’s animated Luxo Jr. character, equipped with a 6-axis robotic arm and a head containing a light and projector. The researchers programmed the robot with two types of movements: purely functional ones focused on completing tasks, and more expressive movements designed to communicate the robot’s internal state.

In user testing with 21 participants, the expressive movements significantly improved people’s engagement with and perception of the robot. The effect was especially pronounced during social tasks like playing music or engaging in conversation, and weaker for purely functional tasks like adjusting lighting.

“Without the playfulness, I might find this type of interaction with a robot annoying rather than welcome and engaging,” noted one study participant, highlighting how expressive movements made even potentially intrusive robot behaviors more acceptable.

A visual guide showing the expressive movement vocabulary developed for the lamp-like robot, including basic gestures and spatial behaviors. (Credit: Apple)

User testing reveals age gap in robot movement preferences

The research comes as major tech companies increasingly explore home robotics. While most current home robots, like robot vacuums, focus purely on function, this work suggests that adding more natural, expressive movements could make future robots more appealing companions.

However, the researchers note that balance is crucial. “There needs to be a balance between engagement through motion and speed completion of the task being given, otherwise the human might grow impatient,” one participant observed.

The study also found that older participants were significantly less receptive to expressive robot movements, suggesting that robot behavior may need to be customized based on user preferences.

The robot’s capabilities range from functional tasks like providing reading light to social interactions such as creative suggestions and playful companionship. (Credit: Apple)

The future of social robotics: Finding the sweet spot between function and expression

While Apple rarely discusses its robotics research publicly, this work offers intriguing hints about how the tech giant might approach future home robots. The study suggests a fundamental shift in robotics design: Instead of focusing solely on what robots can do, companies must consider how robots make people feel.

The challenge ahead lies not just in programming robots to complete tasks, but in making their presence welcome in our most intimate spaces. As robots transition from factory floors to living rooms, their success may depend less on raw efficiency and more on their ability to read the room, both literally and metaphorically.

Apple’s paper will be presented at the 2025 Designing Interactive Systems conference in Madeira this July.

The results point to a future where robot design requires as much input from animators and behavioral psychologists as it does from engineers. As robots become more common in homes and workplaces, making them move in ways that feel natural rather than mechanical could be the difference between another forgotten gadget and a truly indispensable companion. The real test will be whether companies like Apple can translate these research insights into products that people not only use, but genuinely want to interact with.