Open-source DeepSeek-R1 uses pure reinforcement learning to match OpenAI o1 — at 95% less cost

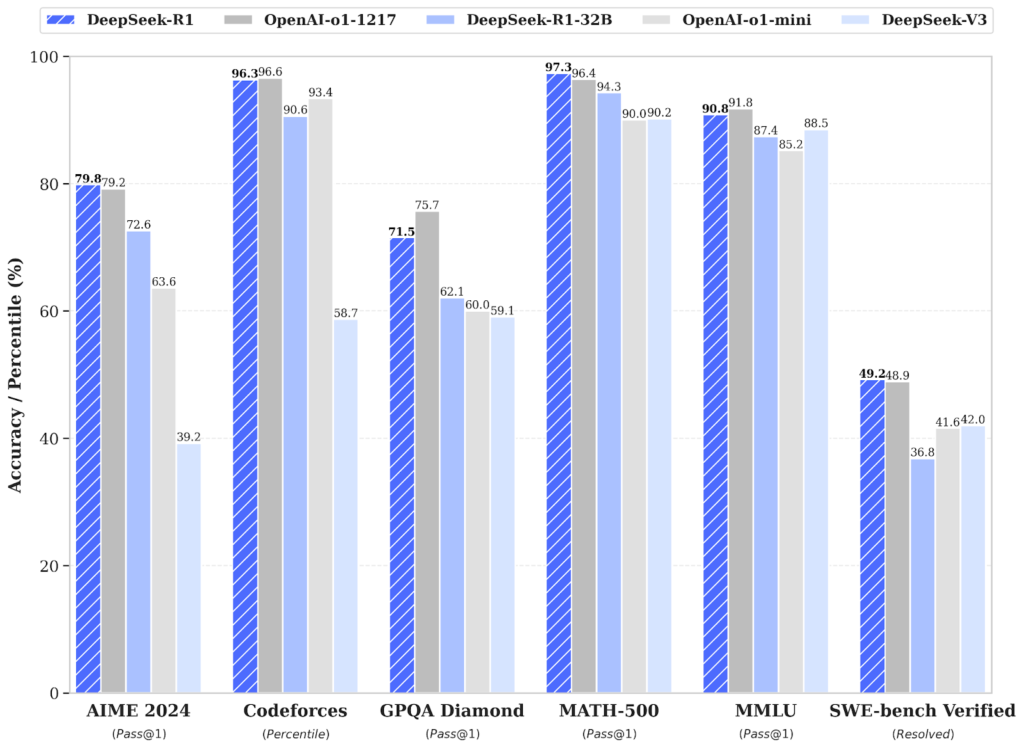

Chinese AI startup DeepSeek, known for challenging leading AI vendors with open-source technologies, just dropped another bombshell: a new open reasoning LLM called DeepSeek-R1.

Based on the recently introduced DeepSeek V3 mixture-of-experts model, DeepSeek-R1 matches the performance of o1, OpenAI’s frontier reasoning LLM, across math, coding and reasoning tasks. The best part? It does this at a much more tempting cost, proving to be 90-95% more affordable than the latter.

The release marks a major leap forward in the open-source arena. It shows that open models are further closing the gap with closed commercial models in the race to artificial general intelligence (AGI). To show the prowess of its work, DeepSeek also used R1 to distill six Llama and Qwen models, taking their performance to new levels. In one case, the distilled version of Qwen-1.5B outperformed much bigger models, GPT-4o and Claude 3.5 Sonnet, in select math benchmarks.

These distilled models, along with the main R1, have been open-sourced and are available on Hugging Face under an MIT license.

What does DeepSeek-R1 bring to the table?

The focus is sharpening on AGI, a level of AI that can perform intellectual tasks like humans. A lot of teams are doubling down on enhancing models’ reasoning capabilities. OpenAI made the first notable move in the domain with its o1 model, which uses a chain-of-thought reasoning process to tackle a problem. Through RL (reinforcement learning, or reward-driven optimization), o1 learns to hone its chain of thought and refine the strategies it uses, ultimately learning to recognize and correct its mistakes, or try new approaches when the current ones aren’t working.
Now, continuing the work in this direction, DeepSeek has released DeepSeek-R1, which uses a combination of RL and supervised fine-tuning to handle complex reasoning tasks and match the performance of o1.

When tested, DeepSeek-R1 scored 79.8% on AIME 2024 mathematics tests and 97.3% on MATH-500. It also achieved a 2,029 rating on Codeforces, better than 96.3% of human programmers. In contrast, o1-1217 scored 79.2% on AIME 2024, 96.4% on MATH-500 and 96.6% on the Codeforces percentile. DeepSeek-R1 also demonstrated strong general knowledge, with 90.8% accuracy on MMLU, just behind o1’s 91.8%.

Figure: Performance of DeepSeek-R1 vs OpenAI o1 and o1-mini

The training pipeline

DeepSeek-R1’s reasoning performance marks a big win for the Chinese startup in the US-dominated AI space, especially as the entire work is open-source, including how the company trained the whole thing.

However, the work isn’t as straightforward as it sounds. According to the paper describing the research, DeepSeek-R1 was developed as an enhanced version of DeepSeek-R1-Zero, a breakthrough model trained solely through reinforcement learning.

“We are living in a timeline where a non-US company is keeping the original mission of OpenAI alive – truly open, frontier research that empowers all. It makes no sense. The most entertaining outcome is the most likely. DeepSeek-R1 not only open-sources a barrage of models but…” pic.twitter.com/M7eZnEmCOY (Jim Fan, @DrJimFan, January 20, 2025)

The company first used DeepSeek-V3-base as the base model, developing its reasoning capabilities without employing supervised data, essentially focusing only on its self-evolution through a pure RL-based trial-and-error process. This reasoning ability, which emerged from the training itself, lets the model solve increasingly complex reasoning tasks by leveraging extended test-time computation to explore and refine its thought processes in greater depth.
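
The R1-Zero results reported in the paper are stated as pass@1 and majority-voting scores. As a rough illustration of how those two metrics differ, here is a minimal sketch. It is not DeepSeek's evaluation code: the function names, the five-sample setup and the sampled answers are all made up for the example.

```python
from collections import Counter


def pass_at_1(samples, correct):
    """Fraction of sampled answers that equal the reference answer."""
    return sum(answer == correct for answer in samples) / len(samples)


def majority_vote(samples):
    """Most frequent sampled answer (ties go to the answer seen first)."""
    return Counter(samples).most_common(1)[0][0]


def evaluate(problems):
    """Return (mean pass@1, majority-vote accuracy) over all problems."""
    mean_pass = sum(pass_at_1(s, c) for s, c in problems) / len(problems)
    voted = sum(majority_vote(s) == c for s, c in problems) / len(problems)
    return mean_pass, voted


# Hypothetical data: five sampled answers per problem, plus the reference answer.
PROBLEMS = [
    (["204", "204", "113", "204", "97"], "204"),  # vote picks the right answer
    (["33", "12", "33", "12", "12"], "33"),       # vote picks the wrong answer
    (["7", "7", "7", "8", "7"], "7"),             # vote picks the right answer
]

if __name__ == "__main__":
    mean_pass, voted = evaluate(PROBLEMS)
    print(f"mean pass@1: {mean_pass:.2f}, majority-vote accuracy: {voted:.2f}")
```

Voting can score higher than pass@1 because a problem counts as solved whenever the correct answer is the most common one, even if many individual samples are wrong.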
“During training, DeepSeek-R1-Zero naturally emerged with numerous powerful and interesting reasoning behaviors,” the researchers note in the paper. “After thousands of RL steps, DeepSeek-R1-Zero exhibits super performance on reasoning benchmarks. For instance, the pass@1 score on AIME 2024 increases from 15.6% to 71.0%, and with majority voting, the score further improves to 86.7%, matching the performance of OpenAI-o1-0912.”

However, despite showing improved performance, including behaviors like reflection and exploration of alternatives, the initial model did show some problems, including poor readability and language mixing. To fix this, the company built on the work done for R1-Zero, using a multi-stage approach combining both supervised learning and reinforcement learning, and thus came up with the enhanced R1 model.

“Specifically, we begin by collecting thousands of cold-start data to fine-tune the DeepSeek-V3-Base model,” the researchers explained. “Following this, we perform reasoning-oriented RL like DeepSeek-R1-Zero. Upon nearing convergence in the RL process, we create new SFT data through rejection sampling on the RL checkpoint, combined with supervised data from DeepSeek-V3 in domains such as writing, factual QA, and self-cognition, and then retrain the DeepSeek-V3-Base model. After fine-tuning with the new data, the checkpoint undergoes an additional RL process, taking into account prompts from all scenarios. After these steps, we obtained a checkpoint referred to as DeepSeek-R1, which achieves performance on par with OpenAI-o1-1217.”

Far more affordable than o1

In addition to enhanced performance that nearly matches OpenAI’s o1 across benchmarks, the new DeepSeek-R1 is also very affordable. Specifically, where OpenAI o1 costs $15 per million input tokens and $60 per million output tokens, DeepSeek Reasoner, which is based on the R1 model, costs $0.55 per million input and $2.19 per million output tokens.
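
To put those list prices in perspective, the short sketch below works out the per-token saving and the bill for a sample job. The prices are the ones quoted above; the workload size (2 million input tokens, 500,000 output tokens) and the function names are assumptions made for illustration. On these numbers the saving comes to roughly 96% for both input and output tokens, slightly more than the 90-95% range cited.

```python
# Per-million-token list prices quoted in the article (USD).
O1_PRICE = {"input": 15.00, "output": 60.00}
R1_PRICE = {"input": 0.55, "output": 2.19}


def savings_pct(cheaper, pricier):
    """Percentage saved by paying `cheaper` instead of `pricier`."""
    return (1 - cheaper / pricier) * 100


def job_cost(price, input_tokens, output_tokens):
    """Cost in USD of a job, given per-million-token prices."""
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    for kind in ("input", "output"):
        saved = savings_pct(R1_PRICE[kind], O1_PRICE[kind])
        print(f"{kind} tokens: about {saved:.0f}% cheaper")

    # Hypothetical workload, chosen only to make the arithmetic concrete.
    tokens_in, tokens_out = 2_000_000, 500_000
    print(f"o1: ${job_cost(O1_PRICE, tokens_in, tokens_out):.2f}")
    print(f"R1: ${job_cost(R1_PRICE, tokens_in, tokens_out):.2f}")
```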
“Sooo @deepseek_ai's reasoner model, which sits somewhere between o1-mini & o1 is about 90-95% cheaper 👀” https://t.co/ohnI6dtPRC pic.twitter.com/Qn78yIGUtt (Emad, @EMostaque, January 20, 2025)

The model can be tested as “DeepThink” on the DeepSeek chat platform, which is similar to ChatGPT. Interested users can access the model weights and code repository via Hugging Face, under an MIT license, or use the API for direct integration.
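
For readers who want a feel for the API route, here is a minimal sketch of what a request might look like. None of the specifics come from this article: the endpoint URL, the `deepseek-reasoner` model id, the OpenAI-style request body, the response shape and the `DEEPSEEK_API_KEY` environment variable are all assumptions, so check DeepSeek's own API documentation before relying on them.

```python
import json
import os
import urllib.request

# Assumptions, not taken from the article: verify against the official docs.
API_URL = "https://api.deepseek.com/chat/completions"
MODEL = "deepseek-reasoner"


def build_request(prompt, api_key):
    """Build (but do not send) a chat-completion request for one user prompt."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("DEEPSEEK_API_KEY")  # hypothetical variable name
    if key:
        request = build_request("How many primes are there below 100?", key)
        with urllib.request.urlopen(request) as response:
            body = json.load(response)
        # Assumes an OpenAI-style response layout.
        print(body["choices"][0]["message"]["content"])
    else:
        print("Set DEEPSEEK_API_KEY to send a real request.")
```

Keeping request construction separate from the network call makes the payload easy to inspect before any tokens are billed.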