Here’s what AI-powered startups need to succeed in 2025

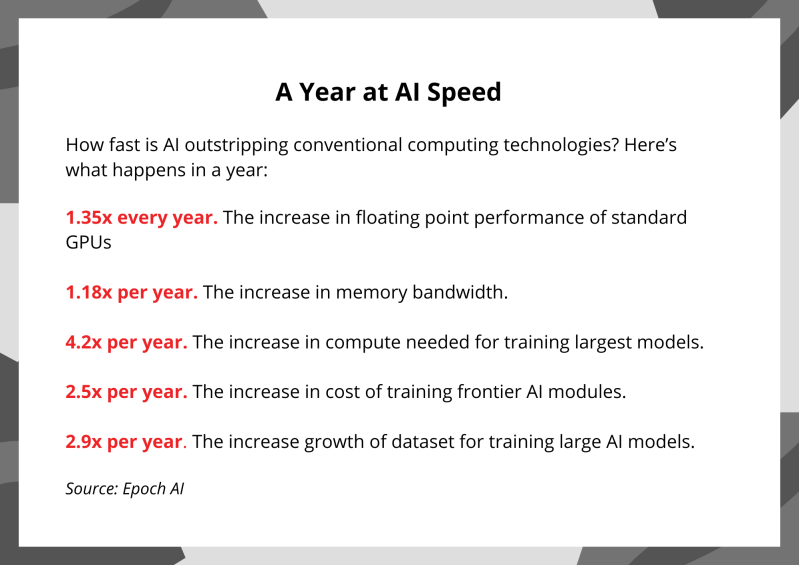

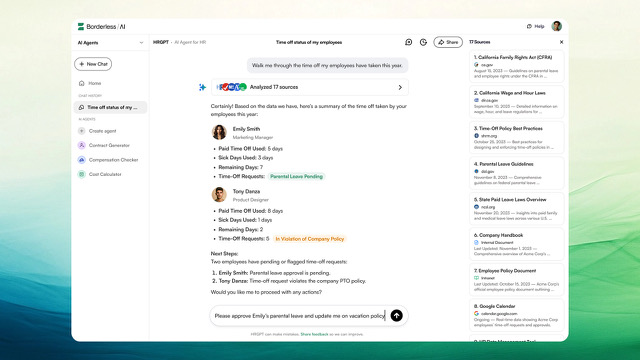

Presented by Twilio

In 2024, thousands of startups emerged built on the powerful capabilities of cutting-edge large language models (LLMs). Statistically speaking, only one-fifth will survive to the end of 2025. To make it beyond this year, these companies will need an edge.

That said, I’ve never been more excited about the potential of a new tech sector. AI-powered startups will remake our world in ways we can’t imagine yet, if they have the ingredients to succeed.

Serving as a judge for Twilio’s Startup Searchlight 2.0 competition, which celebrates the builders creating the future of communications and customer engagement, drove this point home for me. We selected 12 honorees from among the more than 500 companies that applied. All of the winners embody a few basic principles that startups need to remember when building AI-powered solutions. Keep these in mind, and you will have a good start on building an AI business that will last.

1. Focus on business basics

You can’t count on AI alone to give you a competitive advantage: it’s too ubiquitous. The challenges of starting and running a startup remain much as they were before LLMs came along. You need to attract, convert and retain customers. You need to keep costs under control: While AI is getting cheaper all the time, it is still possible to run up the tab if you create complicated workflows (and take note that 72% of IT and financial leaders say AI costs are becoming “unmanageable”).

Of course, you also need to establish and defend a sustainable competitive advantage. AI’s power can be your advantage too, if you can take something that is currently complicated to do and encapsulate it in an easy-to-use API framework. That’s what Twilio did for telephony a decade ago, and it’s what the big AI models are doing today. If you want to build a sustainable tech business today, think about how you can deliver it via an API.

2. Build more than a wrapper

If you’re just creating a “wrapper” for existing LLMs, you won’t be able to maintain differentiation over the long haul. For example, if you’re trying to create a tool to help create code, do speech transcription or scan PDFs and extract information, it doesn’t matter how nice your interface is: the major LLMs are already excellent at these tasks.

Focus on an area where you can provide a differentiated service that gives you a compounding advantage through a data flywheel or network effects. For example, one of the AI Startup Searchlight honorees, Goodcall, automates voice calls for businesses. It has been amassing anonymized data from over 4 million customer calls to build a more robust database and improved analytics.

Another area startups could focus on is pulling data out of unstructured customer conversations. One Searchlight honoree, Spoke AI, does this by pulling data from customers’ voice calls so that business users can see who is calling them, what they might want, how they are feeling and what they talked about previously with colleagues.

3. Understand the growth trajectory of AI

AI is changing incredibly fast. The number of AI patents per year has increased 31x since 2010, with over 62,000 granted in 2022. When deciding where to focus your efforts, first learn about the arc of LLM development and where it’s likely to go in the next 12 months. If you don’t, your solution may be obsolete before you can get it to market.

For example, the big AI labs are currently working to enhance the reasoning capabilities of these models, improving their capabilities in various complex domains. Don’t focus on advanced reasoning unless you have billions in funding!

By contrast, one of the Searchlight honorees, CuraJOY, is a grassroots tech nonprofit that uses AI and entertainment to improve the accessibility, effectiveness and equity of social and mental health support. That’s definitely not an area of focus for the big AI models, but it’s meeting a major societal need.

4. Capture the excitement

New AI solutions attract a lot of interest, but the excitement is fleeting. If you don’t have a plan to capture those tire-kickers and turn them into long-term customers, your business will fade quickly along with the hype.

You need to maintain a high interest level. One way to do that is to keep improving your product based on customers’ input. For example, you might use AI to capture and sort customer feedback and route the highest-value feature requests directly to your product team. That will keep customers coming back and fuel sustainable growth.

Another way is to keep raising the bar with new capabilities, certifications and customer-friendly offers. Here’s an illustration from a Searchlight honoree: Alpharun is an AI-powered phone interview platform; it was part of the OpenAI accelerator this year and won the audience award at the 2024 Staffing Industry Analysts conference. The company wasn’t content to rest on its laurels: It’s already securing key technology certifications and offering its customers uptime guarantees, international support and top-notch reliability, all essential offerings for the enterprise customers it’s targeting.

Looking forward to an AI-powered economy

While 2024 marked the year of AI experimentation, 2025 will be defined by AI-powered startups delivering measurable business impact. Through my work at Lightspeed and experience judging Twilio’s Searchlight competition, one thing is clear: The most promising companies aren’t just creating clever AI implementations. They’re building robust businesses that can weather the inevitable changes in technology.

The AI Searchlight honorees exemplify this approach, building true competitive moats with compounding advantages. These companies show us that lasting success comes from combining AI capabilities with deep domain expertise and strong business fundamentals.

We’re at the dawn of a new tech boom, and I have no doubt that some of today’s builders will emerge as tomorrow’s tech giants.

Learn more about the Twilio AI Startup Searchlight and the honorees here.

Nnamdi Iregbulem is an investment partner at Lightspeed Venture Partners.

Sponsored articles are content produced by a company that is
