Anthropic’s Computer Use mode shows strengths and limitations in new study

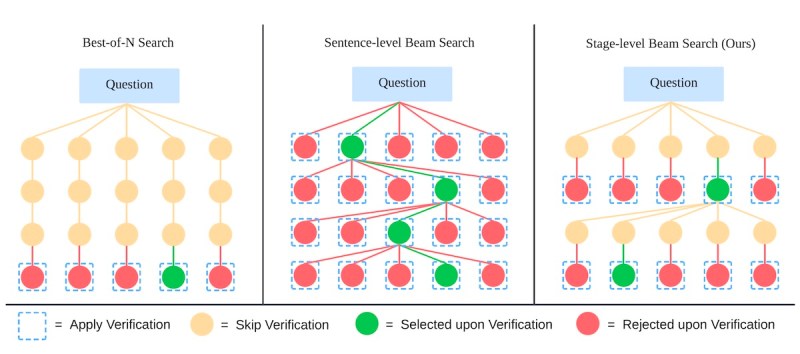

Since Anthropic released the “Computer Use” feature for Claude in October, there has been a lot of excitement about what AI agents can do when given the power to imitate human interactions. A new study by Show Lab at the National University of Singapore provides an overview of what we can expect from the current generation of graphical user interface (GUI) agents.

Claude is the first frontier model that can act as a GUI agent, interacting with a device through the same interfaces humans use. The model accesses only desktop screenshots and acts by triggering keyboard and mouse actions. The feature promises to let users automate tasks through simple instructions, without needing API access to applications.

The researchers tested Claude on a variety of tasks, including web search, workflow completion, office productivity and video games. Web search tasks involve navigating and interacting with websites, such as searching for and purchasing items or subscribing to news services. Workflow tasks involve multi-application interactions, such as extracting information from a website and inserting it into a spreadsheet. Office productivity tasks test the agent’s ability to perform common operations such as formatting documents, sending emails and creating presentations. The video game tasks evaluate the agent’s ability to perform multi-step tasks that require understanding the game’s logic and planning actions.

Each task tests the model’s ability across three dimensions: planning, action and critic. First, the model must come up with a coherent plan to accomplish the task. It must then carry out the plan by translating each step into an action, such as opening a browser, clicking on elements and typing text.
Finally, the critic element determines whether the model can evaluate its progress and success in accomplishing the task. The model should be able to recognize when it has made errors along the way and correct course. And if the task is not possible, it should give a logical explanation. The researchers built a framework around these three components, and humans reviewed and rated all the tests.

In general, Claude did a great job of carrying out complex tasks. It was able to reason about and plan the multiple steps needed to carry out a task, perform the actions and evaluate its progress every step of the way. It could also coordinate between different applications, such as copying information from web pages and pasting it into spreadsheets. In some cases, it even revisited the results at the end of the task to make sure everything aligned with the goal. The model’s reasoning trace shows that it has a general understanding of how different tools and applications work and can coordinate them effectively.

However, it also tends to make trivial mistakes that average human users would easily avoid. For example, in one task, the model failed to complete a subscription because it did not scroll down the webpage to find the corresponding button. In other cases, it failed at very simple and clear tasks, such as selecting and replacing text or changing bullet points to numbers. Moreover, the model either didn’t realize its error or made wrong assumptions about why it could not achieve the desired goal.

According to the researchers, the model’s misjudgments of its progress highlight “a shortfall in the model’s self-assessment mechanisms” and suggest that “a complete solution to this still may require improvements to the GUI agent framework, such as an internalized strict critic module.” The results also make clear that GUI agents can’t replicate all the basic nuances of how humans use computers.

What does it mean for enterprises?
The promise of using basic text descriptions to automate tasks is very appealing. But at least for now, the technology is not ready for mass deployment. The models’ behavior is unstable and can lead to unpredictable results, which can have damaging consequences in sensitive applications. Performing actions through interfaces designed for humans is also not the fastest way to accomplish tasks that can be done through APIs. And there is still much to learn about the security risks of giving large language models (LLMs) control of the mouse and keyboard. For example, one study shows that web agents can easily fall victim to adversarial attacks that humans would simply ignore.

Automating tasks at scale still requires robust infrastructure, including APIs and microservices that can be connected securely and served at scale. However, tools like Claude Computer Use can help product teams explore ideas and iterate on different solutions to a problem without investing time and money in developing new features or services to automate tasks. Once a viable solution is discovered, the team can focus on developing the code and components needed to deliver it efficiently and reliably.