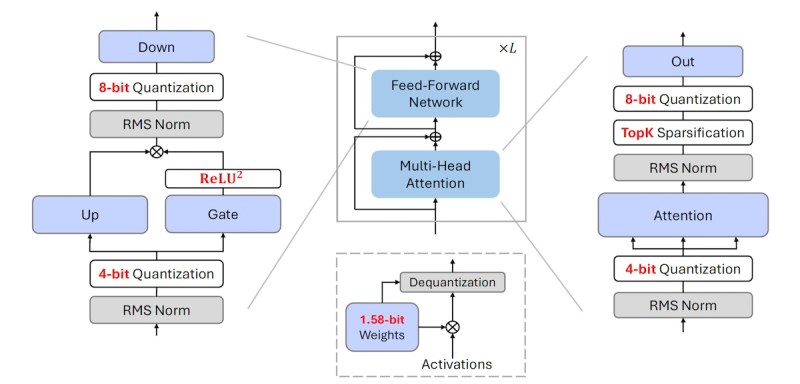

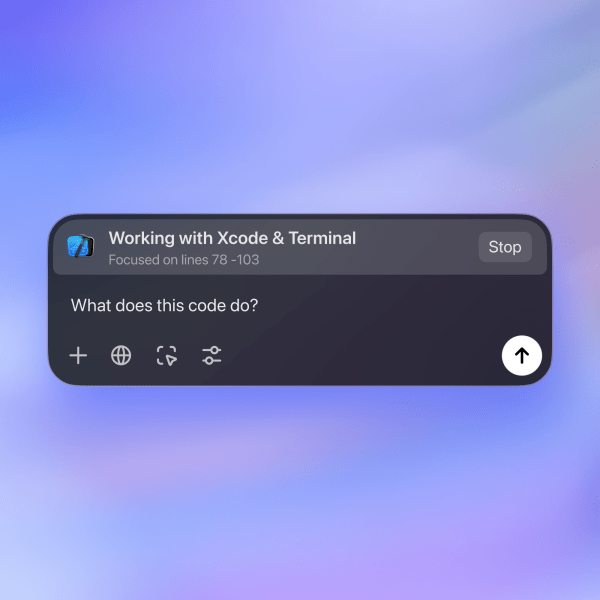

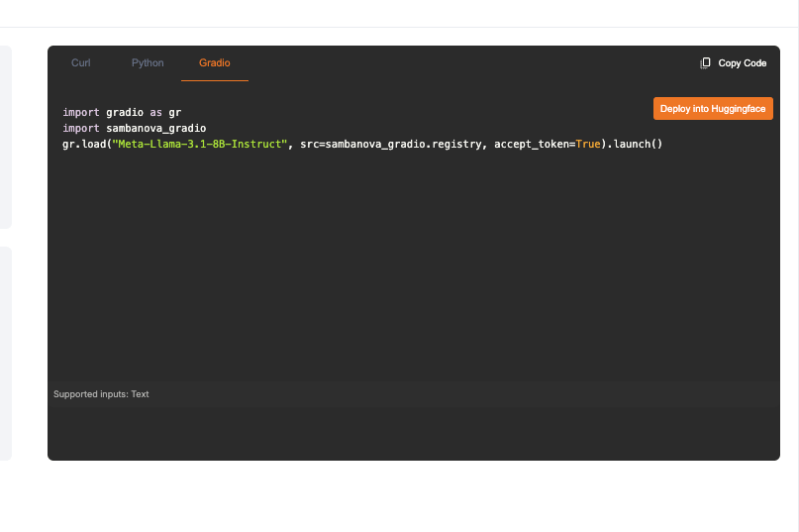

Microsoft has launched a new suite of specialized AI models designed to address specific challenges in manufacturing, agriculture, and financial services. In collaboration with partners such as Siemens, Bayer, Rockwell Automation, and others, the tech giant is aiming to bring advanced AI technologies directly into the heart of industries that have long relied on traditional methods and tools.

These purpose-built models, now available through Microsoft’s Azure AI catalog, represent Microsoft’s most focused effort yet to develop AI tools tailored to the unique needs of different sectors. The company’s initiative reflects a broader strategy to move beyond general-purpose AI and deliver solutions that can provide immediate operational improvements in industries like agriculture and manufacturing, which are increasingly facing pressures to innovate.

“Microsoft is in a unique position to deliver the industry-specific solutions organizations need through the combination of the Microsoft Cloud, our industry expertise, and our global partner ecosystem,” Satish Thomas, Corporate Vice President of Business & Industry Solutions at Microsoft, said in a LinkedIn post announcing the new AI models.

“Through these models,” he added, “we’re addressing top industry use cases, from managing regulatory compliance of financial communications to helping frontline workers with asset troubleshooting on the factory floor — ultimately, enabling organizations to adopt AI at scale across every industry and region… and much more to come in future updates!”

Siemens and Microsoft remake industrial design with AI-powered software

At the center of the initiative is a partnership with Siemens to integrate AI into its NX X software, a widely used platform for industrial design.
Siemens’ NX X copilot uses natural language processing to allow engineers to issue commands and ask questions about complex design tasks. This feature could drastically reduce the onboarding time for new users while helping seasoned engineers complete their work faster.

By embedding AI into the design process, Siemens and Microsoft are addressing a critical need in manufacturing: the ability to streamline complex tasks and reduce human error. This partnership also highlights a growing trend in enterprise technology, where companies are looking for practical AI solutions that improve day-to-day operations rather than experimental or futuristic applications.

Smaller, faster, smarter: How Microsoft’s compact AI models are transforming factory operations

Microsoft’s new initiative relies heavily on its Phi family of small language models (SLMs), which are designed to perform specific tasks while using less computing power than larger models. This makes them well suited to industries like manufacturing, where computing resources can be limited and where companies often need AI that can operate efficiently on factory floors.

Perhaps one of the most novel uses of AI in this initiative comes from Sight Machine, a leader in manufacturing data analytics. Sight Machine’s Factory Namespace Manager addresses a long-standing but often overlooked problem: the inconsistent naming conventions used to label machines, processes, and data across different factories. This lack of standardization has made it difficult for manufacturers to analyze data across multiple sites. The Factory Namespace Manager helps by automatically translating these varied naming conventions into standardized formats, allowing manufacturers to better integrate their data and make it more actionable.

While this may seem like a minor technical fix, the implications are far-reaching. Standardizing data across a global manufacturing network could unlock operational efficiencies that have been difficult to achieve.
Early adopters like Swire Coca-Cola USA, which plans to use this technology to streamline its production data, likely see the potential for gains in both efficiency and decision-making. In an industry where even small improvements in process management can translate into substantial cost savings, addressing this kind of foundational issue is a crucial step toward more sophisticated data-driven operations.

Smart farming gets real: Bayer’s AI model tackles modern agriculture challenges

In agriculture, the Bayer E.L.Y. Crop Protection model is poised to become a key tool for farmers navigating the complexities of modern farming. Trained on thousands of real-world questions related to crop protection labels, the model provides farmers with insights into how best to apply pesticides and other crop treatments, factoring in everything from regulatory requirements to environmental conditions.

This model comes at a crucial time for the agricultural industry, which is grappling with the effects of climate change, labor shortages, and the need to improve sustainability. By offering AI-driven recommendations, Bayer’s model could help farmers make more informed decisions that not only improve crop yields but also support more sustainable farming practices.

The initiative also extends into the automotive and financial sectors. Cerence, which develops in-car voice assistants, will use Microsoft’s AI models to enhance in-vehicle systems. Its CaLLM Edge model allows drivers to control various car functions, such as climate control and navigation, even in settings with limited or no cloud connectivity, making the technology more reliable for drivers in remote areas.

In finance, Saifr, a regulatory technology startup within Fidelity Investments, is introducing models aimed at helping financial institutions manage regulatory compliance more effectively.
These AI tools can analyze broker-dealer communications to flag potential compliance risks in real time, significantly speeding up the review process and reducing the risk of regulatory penalties.

Rockwell Automation, meanwhile, is releasing the FT Optix Food & Beverage model, which helps factory workers troubleshoot equipment in real time. By providing recommendations directly on the factory floor, this AI tool can reduce downtime and help maintain production efficiency in a sector where operational disruptions can be costly.

The release of these AI models marks a shift in how businesses can adopt and implement artificial intelligence. Rather than requiring companies to adapt to broad, one-size-fits-all AI systems, Microsoft’s approach allows businesses to use AI models that are custom-built to address their specific operational challenges. This addresses a major pain point for industries that have been hesitant to adopt AI due to concerns about cost, complexity, or relevance to their particular needs.

The focus on practicality also reflects Microsoft’s understanding that many businesses are looking for AI tools that can deliver immediate, measurable results. In sectors like manufacturing and agriculture,