1. Artificial intelligence in daily life: Views and experiences

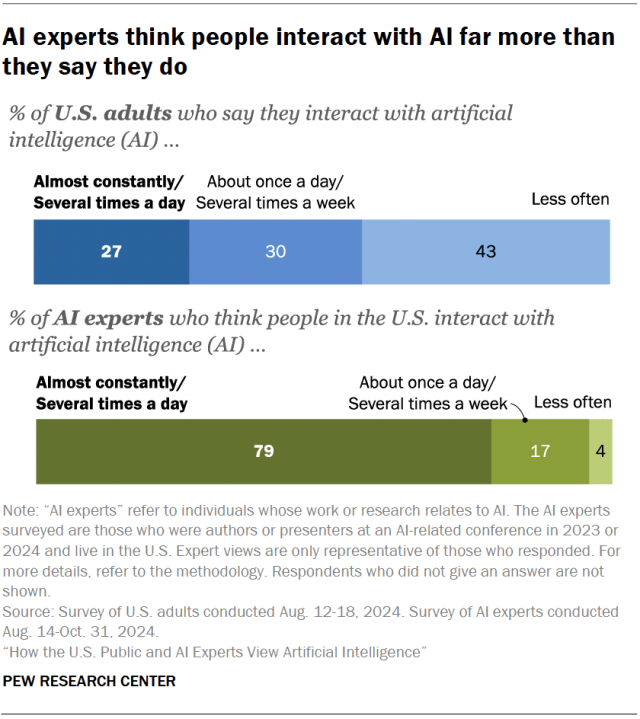

Artificial intelligence is quickly becoming part of everyday life. This chapter explores how the public and experts compare in their experiences with and views of AI tools (such as chatbots), and in how much control they feel they have over AI’s role in their lives.

Interacting with AI

Americans encounter AI in various ways, from social media to health care to financial services. But AI experts believe the public engages with AI more than it reports.

AI experts were asked how often they think people in the United States interact with AI. A vast majority (79%) say people in the U.S. interact with AI almost constantly or several times a day.

A much smaller share of U.S. adults (27%) think they interact with AI at this rate. Three-in-ten say they do so about once a day or several times a week, and 43% report doing so less often.

Use and views of chatbots

It’s been over two years since ChatGPT was released, and other chatbots came soon after. Since then, Americans have increasingly used them for work or entertainment. To that end, we asked AI experts and the general public about their use of these tools.

Using chatbots is nearly universal among experts, but that’s not the case for the general public. One-third of U.S. adults say they have ever used an AI chatbot, compared with nearly all AI experts surveyed (98%). That said, most Americans (72%) have at least heard of chatbots, including 28% who’ve heard a lot.

The public’s experiences with chatbots have not been as positive as those of experts. About six-in-ten AI experts who have used a chatbot (61%) say it was extremely or very helpful to them. A smaller share of users in the general public (33%) say this.

Fewer in both groups report that chatbots have been not too or not at all helpful. Still, U.S. adults who’ve used chatbots are more likely than experts surveyed to say these tools have been not too or not at all helpful (21% vs. 9%).

Do people think they have control over AI in their lives?
Debate continues over how difficult, or even impossible, it is to opt out of AI. On balance, both the American public and the AI experts we surveyed want more control over this technology.

When asked about control over AI use in their lives, almost half or more in both groups say they have little or no control, with this sentiment being somewhat more prevalent among U.S. adults (59%) than AI experts surveyed (46%).

Smaller shares of both groups think they have control over whether AI is used in their lives: 14% of the general public and 23% of AI experts say they have a great deal or quite a bit of control.

What’s more, both U.S. adults and AI experts most commonly say they want more control over how AI is used in their lives. More than half of both AI experts and U.S. adults (57% and 55%, respectively) say this. Fewer in both groups are comfortable with the amount of control they have, though experts are more likely to say this (38% vs. 19%).

Uncertainty is more common among the general public. U.S. adults are far more likely than AI experts to say they are unsure how much control they want over AI (26% vs. 4%).

By gender, among AI experts surveyed

Among experts, women are more likely than men to say that they would like more control over AI (67% vs. 54%).

By job sector, among AI experts surveyed

Experts who work at colleges or universities are more likely than those who work in private companies to say they want more control over AI (61% vs. 50%). Roughly equal shares of both say they have not too much or no control in how AI is used in their lives (47% and 46%, respectively).
