Announcing The Forrester Wave™: Modern Application Development Services, Q1 2025

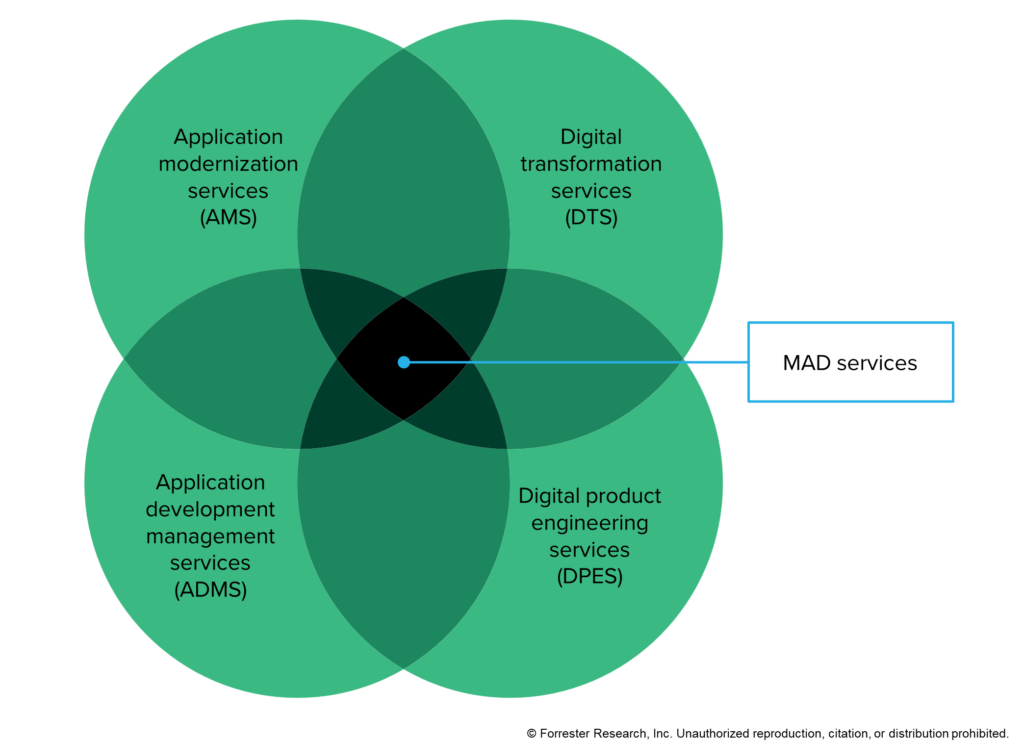

MAD Services Deliver Cool New Products While Transforming Your Development Capabilities

Modern application development (MAD) services represent the next wave in custom application development services. Emerging from the convergence of current and past services such as application development management services (ADMS), digital transformation services (DTS), digital product engineering services (DPES), and broader application modernization services (AMS), MAD services are now used by leading organizations, and a growing number of CIOs are showing strong interest in these offerings (see figure).

What sets MAD services apart? It's their ability not only to help clients deliver modern apps using the latest technologies and development practices but also to transform and modernize those clients' custom development capabilities. The Venn diagram illustrates the context for MAD services and their foundational services, though it doesn't capture the market's multibillion-dollar scale, which is expected to grow.

We just published The Forrester Wave™: Modern Application Development Services, Q1 2025, which analyzes and compares 13 medium and large market players out of more than 50 providers that offer MAD services: Accenture, Capgemini, CI&T, Cognizant, EPAM, Globant, HCLTech, Infosys, LTIMindtree, NTT DATA, Softtek, Tata Consultancy Services, and Thoughtworks.

Why These Players And Not Others?

The MAD services market is highly competitive, and Forrester clients can learn more about the broader landscape and discover a wider group of vendors in The Modern Application Development Services Landscape, Q3 2024. This most recent MAD services Wave focuses on medium and large vendors, whereas our previous Wave evaluation of the same market emphasized smaller ones. But not every company from the landscape report met the stringent criteria for inclusion in the Wave, which were:

- Significant peer recognition. These were the providers most frequently cited in client bids.
- Forrester mindshare. These were the service providers referenced most often during briefings, inquiries, or research projects over the last year.
- MAD capabilities. The Wave's vendors offer comprehensive and differentiating sets of MAD capabilities or, in Forrester's view, unique capabilities that warrant inclusion.
- Global MAD services revenue of at least US$450 million. The included vendors have global MAD services revenue of at least US$450 million across at least two of the North America, LATAM, EMEA, and APAC regions combined.

What Distinguishes The Leaders, Strong Performers, And Contenders?

Our Wave methodology categorizes vendors into three groups (Leaders, Strong Performers, and Contenders) based on the range of services that we evaluated: agile, DevOps, microservices architecture, and cloud services, plus more advanced services such as site reliability engineering, project-to-product capabilities, AI and generative AI architecture services, and the testing and development of AI-infused applications. Showing differentiation in all these services was key to our evaluation. Reference clients, case studies, and other evidence also played a critical role in our analysis. After all, it's the provider's ability to enhance your team's skills in new technologies and practices that truly differentiates MAD services from traditional ADMS or AMS services.

We encourage readers not to dismiss any provider without first examining the detailed descriptions of strategy, capabilities, and client feedback in our Wave report. Download the accompanying Excel file for a breakdown of the questions, scoring, and criteria grading.

For more information, feedback, or questions, email me at [email protected], or if you're a Forrester client, schedule a guidance session or inquiry. I'm here to assist!
