OpenAI’s o1 model doesn’t show its thinking, giving open source an advantage

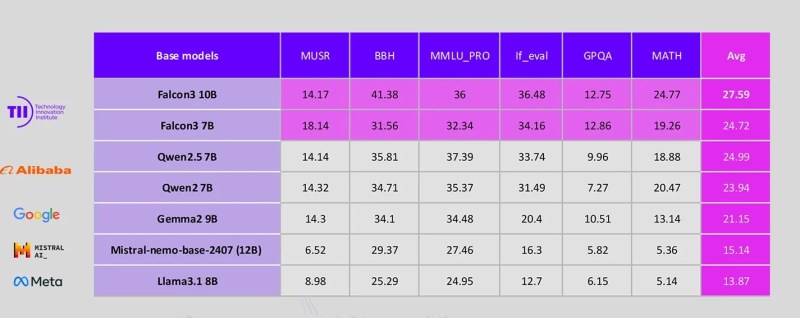

OpenAI has ushered in a new reasoning paradigm in large language models (LLMs) with its o1 model, which recently got a major upgrade. However, while OpenAI has a strong lead in reasoning models, it might lose some ground to open-source rivals that are quickly emerging.

Models like o1, sometimes referred to as large reasoning models (LRMs), use extra inference-time compute cycles to “think” more, review their responses and correct their answers. This enables them to solve complex reasoning problems that classic LLMs struggle with and makes them especially useful for tasks such as coding, math and data analysis.

In recent days, however, developers have shown mixed reactions to o1, especially after the updated release. Some have posted examples of o1 accomplishing incredible tasks, while others have expressed frustration over the model’s confusing responses. Developers have reported all kinds of problems, from illogical changes to code to ignored instructions.

Secrecy around o1 details

Part of the confusion is due to OpenAI’s secrecy and its refusal to show the details of how o1 works. The secret sauce behind the success of LRMs is the extra tokens that the model generates as it works toward the final response, referred to as the model’s “thoughts” or “reasoning chain.” For example, if you prompt a classic LLM to generate code for a task, it will immediately generate the code. In contrast, an LRM will generate reasoning tokens that examine the problem, plan the structure of the code, and generate multiple solutions before emitting the final answer.

o1 hides the thinking process and only shows the final response, along with a message that displays how long the model thought and possibly a high-level overview of the reasoning process. This is partly to avoid cluttering the response and to provide a smoother user experience.
But more importantly, OpenAI considers the reasoning chain a trade secret and wants to make it difficult for competitors to replicate o1’s capabilities. The costs of training new models continue to grow and profit margins are not keeping pace, which is pushing some AI labs to become more secretive in order to extend their lead. Even Apollo Research, which red-teamed the model, was not given access to its reasoning chain.

This lack of transparency has led users to all kinds of speculation, including accusations that OpenAI degraded the model to cut inference costs.

Open-source models fully transparent

On the other hand, open-source alternatives such as Alibaba’s Qwen with Questions (QwQ) and Marco-o1 show the full reasoning chain of their models. Another alternative is DeepSeek R1, which is not open source but still reveals its reasoning tokens.

Seeing the reasoning chain enables developers to troubleshoot their prompts and find ways to improve the model’s responses by adding further instructions or in-context examples. Visibility into the reasoning process is especially important when you want to integrate the model’s responses into applications and tools that expect consistent results.

Moreover, having control over the underlying model is important in enterprise applications. Private models and the scaffolding that supports them, such as the safeguards and filters that test their inputs and outputs, are constantly changing. While this may result in better overall performance, it can break many prompts and applications that were built on top of them. In contrast, open-source models give the developer full control of the model, which can be a more robust option for enterprise applications, where performance on very specific tasks matters more than general skills.

QwQ and R1 are still in preview, and o1 has the lead in terms of accuracy and ease of use.
And for many uses, such as general ad hoc prompts and one-time requests, o1 can still be a better option than the open-source alternatives. But the open-source community is quickly catching up with private models, and we can expect more models to emerge in the coming months. These models could become a suitable alternative where visibility and control are crucial.