Is your AI product actually working? How to develop the right metric system

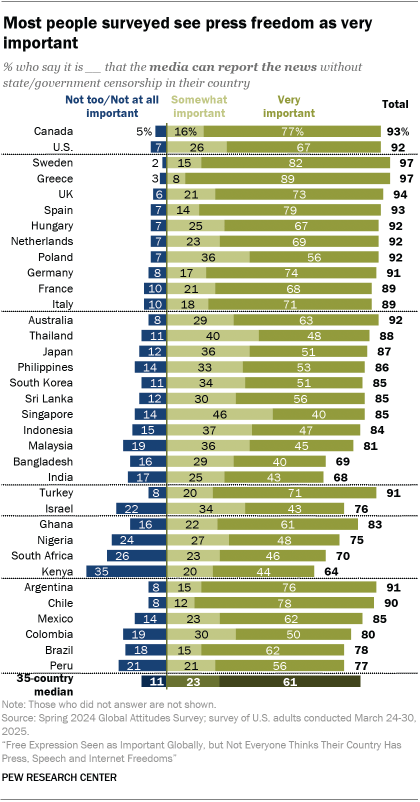

In my first stint as a machine learning (ML) product manager, a simple question inspired passionate debates across functions and leaders: How do we know if this product is actually working? The product I managed catered to both internal and external customers. The model enabled internal teams to identify the top issues faced by our customers so that they could prioritize the right set of experiences to fix those issues. With such a complex web of interdependencies among internal and external customers, choosing the right metrics to capture the impact of the product was critical to steering it toward success.

Not tracking whether your product is working well is like landing a plane without any instructions from air traffic control. You cannot make informed decisions for your customer without knowing what is going right or wrong. Additionally, if you do not actively define the metrics, your team will identify their own back-up metrics. The risk of having multiple flavors of an ‘accuracy’ or ‘quality’ metric is that everyone will develop their own version, leading to a scenario where you might not all be working toward the same outcome.

For example, when I reviewed my annual goal and the underlying metric with our engineering team, the immediate feedback was: “But this is a business metric, we already track precision and recall.”

First, identify what you want to know about your AI product

Once you get down to the task of defining the metrics for your product, where do you begin? In my experience, the complexity of operating an ML product with multiple customers translates to defining metrics for the model, too. What do I use to measure whether a model is working well?
Measuring the outcome of internal teams prioritizing launches based on our models would not be quick enough; measuring whether the customer adopted solutions recommended by our model risked drawing conclusions from a very broad adoption metric (what if the customer didn’t adopt the solution because they just wanted to reach a support agent?).

Fast-forward to the era of large language models (LLMs), where we don’t just have a single output from an ML model; we have text answers, images and music as outputs, too. The dimensions of the product that require metrics now rapidly increase: formats, customers, type … the list goes on.

Across all my products, when I try to come up with metrics, my first step is to distill what I want to know about the product’s impact on customers into a few key questions. Identifying the right set of questions makes it easier to identify the right set of metrics. Here are a few examples:

1. Did the customer get an output? → metric for coverage
2. How long did it take for the product to provide an output? → metric for latency
3. Did the user like the output? → metrics for customer feedback, customer adoption and retention

Once you identify your key questions, the next step is to identify a set of sub-questions for ‘input’ and ‘output’ signals. Output metrics are lagging indicators where you can measure an event that has already happened. Input metrics are leading indicators that can be used to identify trends or predict outcomes. See below for ways to add the right sub-questions for lagging and leading indicators to the questions above. Not all questions need to have leading/lagging indicators.

1. Did the customer get an output? → coverage
2. How long did it take for the product to provide an output? → latency
3. Did the user like the output? → customer feedback, customer adoption and retention
   - Did the user indicate that the output is right/wrong? (output)
   - Was the output good/fair? (input)

The third and final step is to identify the method to gather metrics.
Most metrics are gathered at scale by new instrumentation via data engineering. However, in some instances (like question 3 above), especially for ML-based products, you have the option of manual or automated evaluations that assess the model outputs. While it’s always best to develop automated evaluations, starting with manual evaluations for “was the output good/fair” and creating a rubric for the definitions of good, fair and not good will help you lay the groundwork for a rigorous and tested automated evaluation process, too.

Example use cases: AI search, listing descriptions

The above framework can be applied to any ML-based product to identify the list of primary metrics for your product. Let’s take search as an example.

| Question | Metrics | Nature of metric |
| --- | --- | --- |
| Did the customer get an output? → Coverage | % of search sessions with search results shown to the customer | Output |
| How long did it take for the product to provide an output? → Latency | Time taken to display search results for the user | Output |
| Did the user like the output? → Customer feedback, customer adoption and retention. Sub-question: Did the user indicate that the output is right/wrong? (Output) | % of search sessions with ‘thumbs up’ feedback on search results from the customer, or % of search sessions with clicks from the customer | Output |
| Did the user like the output? Sub-question: Was the output good/fair? (Input) | % of search results marked as ‘good/fair’ for each search term, per quality rubric | Input |

How about a product to generate descriptions for a listing (whether it’s a menu item in DoorDash or a product listing on Amazon)?

| Question | Metrics | Nature of metric |
| --- | --- | --- |
| Did the customer get an output? → Coverage | % of listings with a generated description | Output |
| How long did it take for the product to provide an output? → Latency | Time taken to generate descriptions for the user | Output |
| Did the user like the output? → Customer feedback, customer adoption and retention. Sub-question: Did the user indicate that the output is right/wrong? (Output) | % of listings with generated descriptions that required edits from the technical content team/seller/customer | |
| Did the user like the output? Sub-question: Was the output good/fair? (Input) | % of listing descriptions marked as ‘good/fair’, per | |
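
To make the search example concrete, here is a minimal Python sketch of how the four search metrics could be computed from session logs. The `SearchSession` fields, the function names and the sample data are all hypothetical, not a schema from any real product, and using the median as the latency summary is an assumption (the article only says "time taken to display search results").

```python
from dataclasses import dataclass
from statistics import median
from typing import Iterable, Optional


@dataclass
class SearchSession:
    """One logged search session. All field names are hypothetical."""
    results_shown: int          # number of results displayed to the customer
    latency_ms: float           # time taken to display the results
    thumbs_up: Optional[bool]   # explicit feedback; None if none was given
    clicked: bool               # whether the customer clicked any result


def share(flags: Iterable[bool]) -> float:
    """Fraction of True values; 0.0 for an empty input."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else 0.0


def coverage(sessions):
    """Output metric: share of sessions with at least one result shown."""
    return share(s.results_shown > 0 for s in sessions)


def median_latency_ms(sessions):
    """Output metric: median time to display results (summary is an assumption)."""
    return median(s.latency_ms for s in sessions)


def thumbs_up_rate(sessions):
    """Output metric: share of sessions with explicit 'thumbs up' feedback."""
    return share(s.thumbs_up is True for s in sessions)


def click_rate(sessions):
    """Output metric: share of sessions with at least one click."""
    return share(s.clicked for s in sessions)


def good_fair_rate(ratings):
    """Input metric: share of rated results labeled 'good' or 'fair' per the rubric."""
    return share(r in {"good", "fair"} for r in ratings)


# Made-up sample data for illustration only.
sessions = [
    SearchSession(results_shown=10, latency_ms=120.0, thumbs_up=True, clicked=True),
    SearchSession(results_shown=0, latency_ms=300.0, thumbs_up=None, clicked=False),
    SearchSession(results_shown=5, latency_ms=180.0, thumbs_up=False, clicked=True),
    SearchSession(results_shown=8, latency_ms=150.0, thumbs_up=None, clicked=False),
]
ratings = ["good", "fair", "not good", "good"]

print(f"coverage:        {coverage(sessions):.0%}")
print(f"median latency:  {median_latency_ms(sessions)} ms")
print(f"thumbs-up rate:  {thumbs_up_rate(sessions):.0%}")
print(f"click rate:      {click_rate(sessions):.0%}")
print(f"good/fair rate:  {good_fair_rate(ratings):.0%}")
```

The first four functions are output (lagging) metrics derived from instrumentation, while `good_fair_rate` is the input (leading) metric and depends on ratings produced by a manual or automated evaluation against the quality rubric.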
