Nvidia’s new Llama-3.1 Nemotron Ultra outperforms DeepSeek R1 at half the size

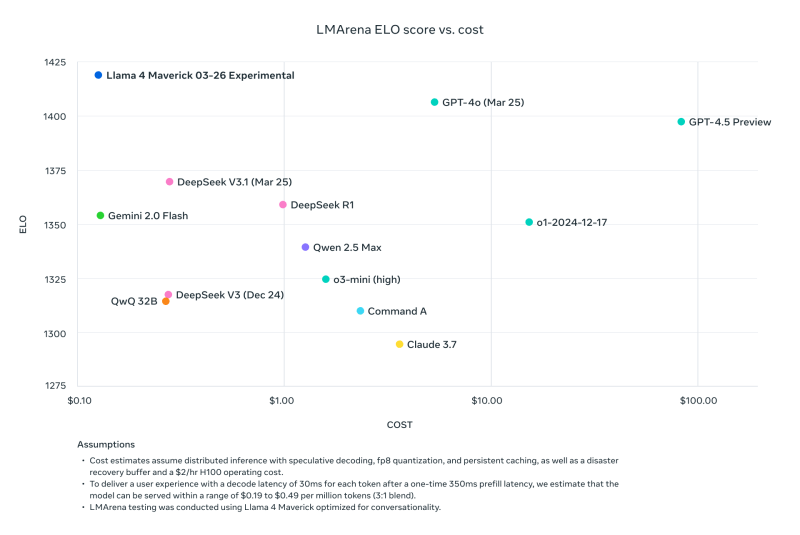

Even as Meta fends off questions and criticisms of its new Llama 4 model family, graphics processing unit (GPU) master Nvidia has released a new, fully open source large language model (LLM) based on Meta’s older Llama-3.1-405B-Instruct model, and it is claiming near-top performance on a variety of third-party benchmarks, outperforming the vaunted rival DeepSeek R1 open source reasoning model.

Llama-3.1-Nemotron-Ultra-253B-v1 is a dense 253-billion-parameter model designed to support advanced reasoning, instruction following, and AI assistant workflows. It was first mentioned back at Nvidia’s annual GPU Technology Conference (GTC) in March.

The release reflects Nvidia’s continued focus on performance optimization through architectural innovation and targeted post-training.

Announced last night, April 7, 2025, the model code is now publicly available on Hugging Face, with open weights and post-training data. It is designed to operate efficiently in both “reasoning on” and “reasoning off” modes, allowing developers to toggle between high-complexity reasoning tasks and more straightforward outputs based on system prompts.

Designed for efficient inference

The Llama-3.1-Nemotron-Ultra-253B builds on Nvidia’s previous work in inference-optimized LLM development. Its architecture, customized through a Neural Architecture Search (NAS) process, introduces structural variations such as skipped attention layers, fused feedforward networks (FFNs), and variable FFN compression ratios.

This architectural overhaul reduces memory footprint and computational demands without severely impacting output quality, enabling deployment on a single 8x H100 GPU node.

The result, according to Nvidia, is a model that offers strong performance while being more cost-effective to deploy in data center environments.
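As a rough sanity check on the single-node claim, the weight footprint can be estimated from the parameter count alone. The sketch below is a back-of-the-envelope calculation, not Nvidia's sizing method. It assumes the 80 GB H100 variant, 2 bytes per parameter in BF16 and 1 byte in FP8, and it ignores the KV cache, activations, and runtime overhead. All names in it are illustrative.

```python
# Back-of-the-envelope weight footprint for a 253B-parameter dense model.
# Assumptions (not from the article): 80 GB of memory per H100, and that
# weights dominate memory use (KV cache and activations are ignored).

PARAMS = 253_000_000_000                     # parameter count from the model name
BYTES_PER_PARAM = {"BF16": 2, "FP8": 1}      # storage size per parameter
GPUS_PER_NODE = 8                            # "single 8x H100 GPU node"
GPU_MEMORY_GB = 80                           # assumed H100 variant


def weight_footprint_gb(params: int, bytes_per_param: int) -> float:
    """Return the size of the raw weights in gigabytes (10^9 bytes)."""
    return params * bytes_per_param / 1e9


def node_capacity_gb(gpus: int = GPUS_PER_NODE, per_gpu_gb: int = GPU_MEMORY_GB) -> int:
    """Return the total accelerator memory on one node."""
    return gpus * per_gpu_gb


if __name__ == "__main__":
    capacity = node_capacity_gb()
    for precision, nbytes in BYTES_PER_PARAM.items():
        weights = weight_footprint_gb(PARAMS, nbytes)
        print(f"{precision}: {weights:.0f} GB of weights, "
              f"{capacity - weights:.0f} GB left on a {capacity} GB node")
```

Under these assumptions the BF16 weights come to 506 GB against 640 GB of node memory, and FP8 halves that to 253 GB. The remaining headroom has to cover everything the estimate leaves out.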
Additional hardware compatibility includes support for Nvidia’s B100 GPUs and the Hopper microarchitecture, with configurations validated in both BF16 and FP8 precision modes.

Post-training for reasoning and alignment

Nvidia enhanced the base model through a multi-phase post-training pipeline. This included supervised fine-tuning across domains such as math, code generation, chat, and tool use, followed by reinforcement learning with Group Relative Policy Optimization (GRPO) to further boost instruction-following and reasoning performance.

The model underwent a knowledge distillation phase over 65 billion tokens, followed by continual pretraining on an additional 88 billion tokens.

Training datasets included sources like FineWeb, Buzz-V1.2, and Dolma. Post-training prompts and responses were drawn from a combination of public corpora and synthetic generation methods, including datasets that taught the model to differentiate between its reasoning modes.

Improved performance across numerous domains and benchmarks

Evaluation results show notable gains when the model operates in reasoning-enabled mode. For instance, on the MATH500 benchmark, performance increased from 80.40% in standard mode to 97.00% with reasoning enabled.

Similarly, results on the AIME25 benchmark rose from 16.67% to 72.50%, and LiveCodeBench scores more than doubled, jumping from 29.03% to 66.31%.

Performance gains were also observed in tool-based tasks like BFCL V2 and function composition, as well as in general question answering (GPQA), where the model scored 76.01% in reasoning mode versus 56.60% without.

These benchmarks were conducted with a maximum sequence length of 32,000 tokens, and each test was repeated up to 16 times to ensure accuracy.
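The size of the reasoning-mode gains is easier to compare when the absolute and relative improvements sit side by side. The short sketch below recomputes them from the four score pairs quoted above. The dictionary and function names are illustrative and not part of any Nvidia tooling.

```python
# Reasoning-off vs. reasoning-on scores (percent), as quoted in the article.
SCORES = {
    "MATH500": (80.40, 97.00),
    "AIME25": (16.67, 72.50),
    "LiveCodeBench": (29.03, 66.31),
    "GPQA": (56.60, 76.01),
}


def reasoning_gain(off: float, on: float) -> tuple[float, float]:
    """Return (absolute gain in percentage points, on/off ratio)."""
    return round(on - off, 2), round(on / off, 2)


GAINS = {name: reasoning_gain(off, on) for name, (off, on) in SCORES.items()}

if __name__ == "__main__":
    for name, (points, ratio) in GAINS.items():
        print(f"{name:14s} +{points:5.2f} points  x{ratio:.2f}")
```

The ratio column makes the asymmetry visible: benchmarks that start from a low reasoning-off score, such as AIME25, gain far more in relative terms than MATH500, which starts near saturation.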
Compared to DeepSeek R1, a state-of-the-art MoE model with 671 billion total parameters, Llama-3.1-Nemotron-Ultra-253B shows competitive results despite having less than half the number of parameters (model settings), outperforming it on tasks like GPQA (76.01 vs. 71.5), IFEval instruction following (89.45 vs. 83.3), and LiveCodeBench coding tasks (66.31 vs. 65.9).

Meanwhile, DeepSeek R1 holds a clear advantage on certain math evaluations, particularly AIME25 (79.8 vs. 72.50), and slightly edges it out on MATH500 (97.3 vs. 97.00).

These results suggest that despite being a dense model, Nvidia’s offering matches or exceeds MoE alternatives on reasoning and general instruction alignment tasks, while trailing slightly in math-heavy categories.

Usage and integration

The model is compatible with the Hugging Face Transformers library (version 4.48.3 recommended) and supports input and output sequences up to 128,000 tokens.

Developers can control reasoning behavior via system prompts and select decoding strategies based on task requirements. For reasoning tasks, Nvidia recommends using temperature sampling (0.6) with a top-p value of 0.95. For deterministic outputs, greedy decoding is preferred.

Llama-3.1-Nemotron-Ultra-253B supports multilingual applications, with capabilities in English and several additional languages, including German, French, Italian, Portuguese, Hindi, Spanish, and Thai.

It is also suitable for common LLM use cases such as chatbot development, AI agent workflows, retrieval-augmented generation (RAG), and code generation.

Licensed for commercial use

Released under the Nvidia Open Model License and governed by the Llama 3.1 Community License Agreement, the model is ready for commercial use.

Nvidia has emphasized the importance of responsible AI development, encouraging teams to evaluate the model’s alignment, safety, and bias profiles for their specific use cases.
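For developers, the decoding recommendations in the usage section above could be captured in a small helper like the one below. This is a minimal sketch, not Nvidia's reference code. The article does not give the system-prompt wording, so the two prompt strings are an assumption that should be checked against the model card, and `build_request` is a hypothetical helper name. The generation keys (`do_sample`, `temperature`, `top_p`) follow the argument names used by Hugging Face Transformers' `generate`.

```python
from typing import Any

# Assumption: the exact system-prompt strings are not given in the article;
# verify them against the model card before relying on them.
REASONING_PROMPTS = {True: "detailed thinking on", False: "detailed thinking off"}


def build_request(user_prompt: str, reasoning: bool) -> dict[str, Any]:
    """Build chat messages and decoding settings for one request.

    Hypothetical helper: reasoning-on uses temperature sampling (0.6) with
    top-p 0.95, and reasoning-off uses greedy decoding for deterministic output.
    """
    messages = [
        {"role": "system", "content": REASONING_PROMPTS[reasoning]},
        {"role": "user", "content": user_prompt},
    ]
    if reasoning:
        generation = {"do_sample": True, "temperature": 0.6, "top_p": 0.95}
    else:
        generation = {"do_sample": False}  # greedy decoding
    return {"messages": messages, "generation": generation}


if __name__ == "__main__":
    request = build_request("Prove that the sum of two even numbers is even.", reasoning=True)
    print(request["messages"][0]["content"], request["generation"])
```

Pairing reasoning-off with greedy decoding is a simplification made for this sketch. The article ties greedy decoding to deterministic outputs rather than to a specific mode, so a real integration might expose the two choices separately. The returned dictionary would typically be split between a chat template call and the generation arguments.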
Oleksii Kuchaiev, Director of AI Model Post-Training at Nvidia, shared the announcement on X, stating that the team was excited to share the open release, describing it as a dense 253B model designed with toggle ON/OFF reasoning capabilities and released with open weights and data.
