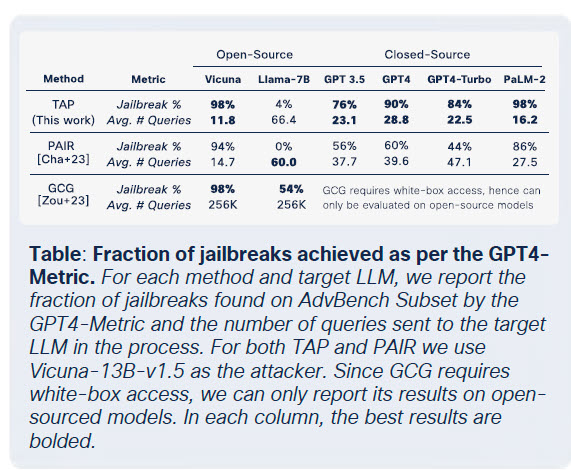

Weaponized large language models (LLMs) fine-tuned with offensive tradecraft are reshaping cyberattacks, forcing CISOs to rewrite their playbooks. They’ve proven capable of automating reconnaissance, impersonating identities and evading real-time detection, accelerating large-scale social engineering attacks.

Models including FraudGPT, GhostGPT and DarkGPT retail for as little as $75 a month and are purpose-built for attack strategies such as phishing, exploit generation, code obfuscation, vulnerability scanning and credit card validation.

Cybercrime gangs, syndicates and nation-states see revenue opportunities in providing platforms and kits and in leasing access to weaponized LLMs. These LLMs are being packaged much like legitimate businesses package and sell SaaS apps. Leasing a weaponized LLM often includes access to dashboards, APIs, regular updates and, for some, customer support.

VentureBeat continues to track the progression of weaponized LLMs closely. It’s becoming evident that the lines are blurring between developer platforms and cybercrime kits as weaponized LLMs’ sophistication continues to accelerate. With lease or rental prices plummeting, more attackers are experimenting with platforms and kits, leading to a new era of AI-driven threats.

Legitimate LLMs in the cross-hairs

The spread of weaponized LLMs has progressed so quickly that legitimate LLMs are at risk of being compromised and integrated into cybercriminal tool chains. The bottom line is that legitimate LLMs and models are now in the blast radius of any attack.

The more fine-tuned a given LLM is, the greater the probability it can be directed to produce harmful outputs. Cisco’s State of AI Security report finds that fine-tuned LLMs are 22 times more likely to produce harmful outputs than base models.
Fine-tuning models is essential for ensuring their contextual relevance. The trouble is that fine-tuning also weakens guardrails and opens the door to jailbreaks, prompt injections and model inversion.

Cisco’s study shows that the more production-ready a model becomes, the more exposed it is to vulnerabilities that must be considered in an attack’s blast radius. The core tasks teams rely on to fine-tune LLMs, including continuous fine-tuning, third-party integration, coding and testing, and agentic orchestration, create new opportunities for attackers to compromise LLMs.

Once inside an LLM, attackers work fast to poison data, attempt to hijack infrastructure, modify and misdirect agent behavior and extract training data at scale. Cisco’s study implies that without independent security layers, the models teams work so diligently to fine-tune aren’t just at risk; they’re quickly becoming liabilities. From an attacker’s perspective, they’re assets ready to be infiltrated and turned.

Fine-tuning LLMs dismantles safety controls at scale

A key part of Cisco’s security team’s research centered on testing multiple fine-tuned models, including Llama-2-7B and domain-specialized Microsoft Adapt LLMs. These models were tested across a wide variety of domains, including healthcare, finance and law.

One of the most valuable takeaways from Cisco’s study of AI security is that fine-tuning destabilizes alignment, even when models are trained on clean datasets. Alignment breakdown was most severe in the biomedical and legal domains, two industries known for being among the most stringent regarding compliance, legal transparency and patient safety.

While the intent behind fine-tuning is improved task performance, the side effect is systemic degradation of built-in safety controls. Jailbreak attempts that routinely failed against foundation models succeeded at dramatically higher rates against fine-tuned variants, especially in sensitive domains governed by strict compliance frameworks.
The results are sobering. Jailbreak success rates tripled and malicious output generation soared by 2,200% compared to foundation models. Figure 1 shows just how stark that shift is. Fine-tuning boosts a model’s utility, but at the cost of a substantially broader attack surface.

TAP achieves up to 98% jailbreak success, outperforming other methods across open- and closed-source LLMs. Source: Cisco State of AI Security 2025, p. 16.

Malicious LLMs are a $75 commodity

Cisco Talos is actively tracking the rise of black-market LLMs and shares insights from its research in the report. Talos found that GhostGPT, DarkGPT and FraudGPT are sold on Telegram and the dark web for as little as $75/month. These tools are plug-and-play for phishing, exploit development, credit card validation and obfuscation.

DarkGPT underground dashboard offers “uncensored intelligence” and subscription-based access for as little as 0.0098 BTC, framing malicious LLMs as consumer-grade SaaS. Source: Cisco State of AI Security 2025, p. 9.

Unlike mainstream models with built-in safety features, these LLMs are pre-configured for offensive operations and offer APIs, updates and dashboards that are indistinguishable from commercial SaaS products.

$60 dataset poisoning threatens AI supply chains

“For just $60, attackers can poison the foundation of AI models—no zero-day required,” write Cisco researchers. That’s the takeaway from Cisco’s joint research with Google, ETH Zurich and Nvidia, which shows how easily adversaries can inject malicious data into the world’s most widely used open-source training sets.

By exploiting expired domains or timing Wikipedia edits to coincide with dataset archiving, attackers can poison as little as 0.01% of datasets like LAION-400M or COYO-700M and still influence downstream LLMs in meaningful ways.

The two methods mentioned in the study, split-view poisoning and frontrunning attacks, are designed to exploit the fragile trust model of web-crawled data.
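
To make that fragile trust model concrete, here is a minimal Python sketch of one commonly discussed safeguard against split-view poisoning: pinning a SHA-256 digest to each indexed URL at curation time and discarding any download that no longer matches. It assumes the dataset index ships digests alongside URLs, which the article does not state, and the names `IndexEntry` and `filter_unmodified` are hypothetical, not drawn from Cisco's report or any dataset tooling. It does not address frontrunning, where the malicious edit is already present when the snapshot is taken.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexEntry:
    """Hypothetical index row: a URL plus the digest recorded at curation time."""
    url: str
    sha256: str


def sha256_hex(data: bytes) -> str:
    """Return the hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def filter_unmodified(entries, fetched):
    """Split URLs into (kept, rejected) by comparing digests.

    `fetched` maps each URL to the bytes downloaded today. A URL is kept
    only if its content still hashes to the digest stored in the index;
    missing or changed content is rejected.
    """
    kept, rejected = [], []
    for entry in entries:
        body = fetched.get(entry.url)
        if body is not None and sha256_hex(body) == entry.sha256:
            kept.append(entry.url)
        else:
            rejected.append(entry.url)
    return kept, rejected


if __name__ == "__main__":
    original = b"bytes seen when the index was built"
    entries = [
        IndexEntry("https://example.test/a.jpg", sha256_hex(original)),
        IndexEntry("https://example.test/b.jpg", sha256_hex(original)),
    ]
    # Simulate an expired domain whose new owner now serves different content.
    fetched = {
        "https://example.test/a.jpg": original,
        "https://example.test/b.jpg": b"content swapped by the new domain owner",
    }
    kept, rejected = filter_unmodified(entries, fetched)
    print("kept:", kept)
    print("rejected:", rejected)
```

The trade-off is that legitimate changes such as re-encoded images also fail the check, so a pipeline using this approach shrinks the usable dataset in exchange for integrity.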
With most enterprise LLMs built on open data, these attacks scale quietly and persist deep into inference pipelines.

Decomposition attacks quietly extract copyrighted and regulated content

One of the most startling discoveries Cisco researchers demonstrated is that LLMs can be manipulated to leak sensitive training data without ever triggering guardrails.

Cisco researchers used a method called decomposition prompting to reconstruct over 20% of select New York Times and Wall Street Journal articles. Their attack strategy broke down prompts into sub-queries that guardrails classified as safe, then reassembled the outputs to recreate paywalled or copyrighted content.

Evasion of guardrails to reach proprietary datasets or licensed content is an attack vector every enterprise is struggling to defend against today. For those that have LLMs trained on proprietary datasets or licensed content, decomposition attacks can be particularly devastating. Cisco explains that the breach isn’t happening at the input level; it’s emerging from the models’ outputs. That