Borderless AI emerges from stealth with $32M in funding to disrupt HR tech

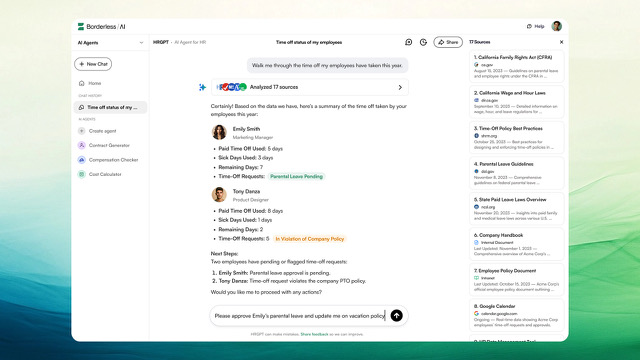

A new artificial intelligence startup is betting that HR departments will become the next major battleground for enterprise AI adoption, launching a specialized search engine that aims to transform how companies manage their workforce.

Borderless AI, which emerged from stealth last year, announced today the release of HRGPT, a free AI-powered search engine that allows companies to query their internal HR data alongside employment laws and regulations. The company also disclosed a $5 million strategic investment from AI company Cohere, bringing its total seed funding to $32 million.

“Every HR department is going to have AI agents that manage various aspects across the HR stack,” said Willson Cross, cofounder and CEO of Borderless AI, in an exclusive interview with VentureBeat. “We’re proud to be at the forefront of that vertical.”

How Borderless AI’s HRGPT is transforming workforce management

The Toronto-based startup is positioning itself to compete with established HR software providers such as Workday and ADP by focusing exclusively on AI-powered solutions. Its platform already counts several multinational companies as customers, including Dunlop Sporting Goods, which uses the technology to manage employee onboarding across 17 global offices.

Unlike general-purpose AI chatbots, HRGPT combines real-time web search with access to internal company data and specialized HR knowledge. The system can perform tasks ranging from generating employment agreements to tracking time-off requests and managing international expense reimbursements.

“Unlike ChatGPT, we have real-time web search. When a customer asks HRGPT a question, it scans the web for real-time sourcing and citations,” Cross told VentureBeat. The platform also integrates with PricewaterhouseCoopers for employment law expertise.
Borderless AI’s platform displays employee time-off requests and compliance data in a conversational interface designed for HR professionals. (Credit: Borderless AI)

The investment from Cohere signals growing interest in vertical-specific AI applications for the enterprise. While consumer AI tools like ChatGPT have captured public attention, Cross believes the next wave of AI adoption will come from businesses.

“For the next two to three years, it’s going to be the businesses that are catching up and waking up to bringing AI to their organizations,” he said. “HR is one that has many applicable use cases.”

Borderless AI’s approach reflects a broader trend of AI companies focusing on specific industries rather than building general-purpose tools. Similar vertical-focused companies include Harvey AI in legal tech and Sierra in customer service.

Building a billion-dollar HR tech company with AI at its core

The company’s ambitious vision includes automating complex HR processes such as payroll management and employee analytics. Cross indicated that the company aims to build a billion-dollar business with fewer than 50 employees by using AI extensively in its own operations.

However, Borderless AI faces significant challenges, including deciding which features to build next amid strong customer demand. The company must also maintain accuracy and compliance in its automated HR functions, particularly for sensitive tasks such as employment agreements and international payments.

The startup’s success could indicate whether specialized AI tools can compete against established enterprise software providers that are racing to add AI capabilities to their existing products. For now, early customers appear convinced: Borderless AI reports that its AI agents perform tasks every hour across its customer base.