OpenAI launches ChatGPT desktop integrations, rivaling Copilot

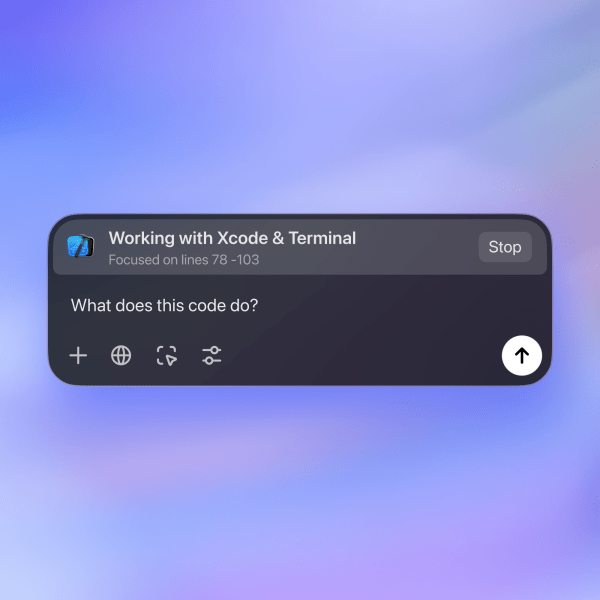

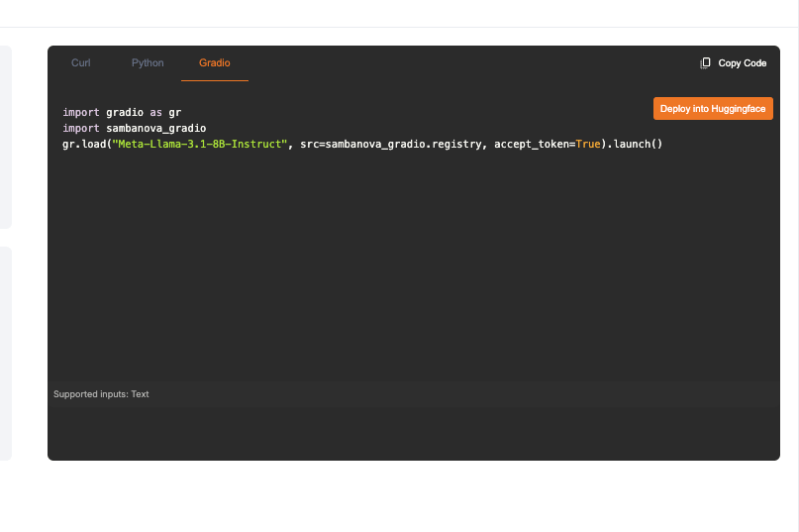

When OpenAI released desktop app versions of ChatGPT, it was clear the goal was to get more users to bring ChatGPT into their daily workflows. Now, new updates to the macOS and Windows PC versions encourage users to stay in the ChatGPT apps for most of their tasks.

Some users of ChatGPT on macOS can now open third-party applications directly from the app. ChatGPT Plus and Team subscribers, with ChatGPT Enterprise and Edu users following soon after, can access VS Code, Xcode, Terminal and iTerm2 from a dropdown. This kind of integration calls to mind GitHub Copilot’s integration with coding platforms, announced in October.

Alexander Embiricos, product lead with the ChatGPT desktop team, said one of the most common user behaviors the company saw was copy-pasting text or code generated with ChatGPT into other applications. Embiricos was the CEO of Multi, a screen sharing and collaboration startup acquired by OpenAI in June.

“We wanted to start integration with [integrated development environments] IDEs because we know a lot of our customers are developers, as we were seeing a lot of copy-pasting text-based material from the app to other platforms,” Embiricos said.

He added that OpenAI wanted to focus on privacy while building the integrations, so the third-party apps open only when the user manually chooses to launch them.

Users can begin coding with ChatGPT and choose VS Code from the app. Once launched, VS Code will open with the same code they were working on. Embiricos said that, in theory, people can have multiple third-party apps open while using ChatGPT on Mac.

Right now, third-party app integration is only available on macOS, but Embiricos said PC users will also get the feature eventually. OpenAI also plans to expand the number of supported apps in the future.

Windows PC is not left behind

The Windows PC version of the ChatGPT desktop app is now available for download to all ChatGPT users, following a limited release to subscribers. Along with expanding the user base, OpenAI updated the PC app with access to Advanced Voice Mode and screenshot capabilities.

Embiricos said customers have been asking for Advanced Voice Mode on desktop for a while, so the team wanted to focus on the feature for the PC app.

The screenshot capability will also take advantage of some features specific to Windows machines, which will let users choose which windows to capture.

“ChatGPT can understand what you’re describing to it, of course, but if you add a photo to your chat, its responses are richer, and we see a lot of users copy-pasting photos into ChatGPT so adding a screenshot option makes that easier,” Embiricos said.

Many of the features in the macOS desktop app will also come to PC, but Embiricos noted that the team focused on making the PC app more widely available first.

Interfaces are the new battleground

Chat interfaces like ChatGPT have proved incredibly useful to a variety of users, but before the advent of desktop versions, people had to go to a website to generate text, code or photos and then carry the chat responses over to whichever application they were doing their actual work in.

So it’s no surprise that companies like OpenAI want to capture more of their customer base by bringing users’ workflows closer to their own interfaces. GitHub made this possible through its integrations with VS Code and Xcode. Anthropic, whose Claude is not integrated with third-party apps, created Artifacts so users don’t have to go elsewhere to see what their generated webpage looks like. OpenAI followed suit with Canvas, which functions similarly.

Meanwhile, Amazon Web Services (AWS) just integrated its Q Developer AI assistant into Visual Studio Code and JetBrains IDEs as an add-on for in-line suggestions and code completion, allowing developers to highlight chunks of their code and type instructions directly to the LLM without toggling over to another screen.

App integration is nothing new for software, as companies often work together to bring services to where users are. For example, Slack includes apps from Zoom, Atlassian, Asana, and Google that people can call up within a chat window.
